Nur Izzati Zainal and Nurul Farhana Jumaat

2026 VOL. 13, No. 1

Abstract: The emergence of Generative AI presents both opportunities and challenges, especially in technology enhanced learning (TEL) and open and distance learning (ODL). Guidance remains fragmented, with ethical safeguards, instructional design, and pedagogy often treated separately. This study identifies key indicators and synthesises them into the Generative AI Integrated Framework for Education (GAIIFE), structured around three interdependent pillars: Ethical Considerations (EC), Instructional Development (ID), and Pedagogical Approach (PA). Key dimensions were derived from 17 papers through conceptual framework development, and two contrasting frameworks were then compared using a simplified Qualitative Comparative Analysis (QCA) to identify which dimensions were present or absent. One framework emphasises ethics, the other instructional design, and both omit pedagogical grounding. GAIIFE integrates ethical integrity, instructional alignment, and learner centred pedagogy to guide responsible Generative AI integration across educational contexts, with relevance for TEL and ODL. The framework contributes to equitable education by offering a practical guide for responsible Generative AI integration.

Keywords: generative AI, conceptual framework, technology enhanced learning, open and distance learning, ethical considerations, instructional development, pedagogical approach, qualitative comparative analysis

In recent years, the integration of digital technologies in education has received growing international attention and is increasingly regarded as a social necessity for preparing learners to navigate a more complex global environment (UNESCO, 2024). This integration has expanded opportunities to improve learning equity, access, and quality, especially through technology enhanced learning (TEL) and open and distance learning (ODL). Within this evolving landscape, Generative Artificial Intelligence (AI) has emerged as a major development, reshaping how learning is designed, delivered, and supported.

Generative AI offers opportunities for personalised learning, automated feedback, and scalable educational content development, which can be valuable for learners with limited access to traditional support. At the same time, institutional adoption has raised serious ethical and governance concerns, particularly around academic integrity and responsible use (Farrelly & Baker, 2023). Copyleaks (2024) reported that AI generated academic submissions increased from 11.92% to 20.98% within one year, indicating growing pressure on originality and assessment standards.

Previous research by Farrelly and Baker (2023) highlights the risks of uncritical reliance on AI detection tools and emphasises fairness and AI literacy as key safeguards, particularly to reduce harmful false accusations against marginalised learners. Chan (2023) addresses governance through institutional policy responses, while Tseng and Warschauer (2023) focus on how classroom pedagogy can adapt to Generative AI rather than resist it. Taken together, these studies reflect attention to ethical safeguards, policy responses, and classroom integration, yet the guidance remains fragmented because these contributions operate at different levels and are rarely integrated into a single, actionable approach.

Some work emphasises ethical governance and compliance (Lauterbach, 2019), while other work focuses on instructional efficiency and content design (Nguyen et al., 2023). In many cases, pedagogical grounding is treated as secondary or is assumed rather than made explicit (Radday & Mervis, 2024). This fragmentation is a practical problem in TEL and ODL contexts, where educators need clear and actionable guidance to ensure that Generative AI supports learning quality while protecting equity, integrity, and meaningful student engagement. In other words, responsible integration requires more than ethical rules or tool guidelines. It requires an integrated view that connects instructional development decisions with ethical considerations and pedagogical intent.

For this reason, this study proposes three essential dimensions for Generative AI integration in education. Instructional Development (ID) refers to how learning tasks, supports, and assessments are designed for purposeful AI use. Ethical Considerations (EC) refer to how integrity, privacy, transparency, and fairness are addressed in everyday learning practice. Pedagogical Approach (PA) refers to how Generative AI use aligns with learning theory and student-centred instructional goals. In this study, these three dimensions function as the core analytic constructs used to synthesise evidence across the literature and to develop GAIIFE, an integrated conceptual framework for TEL and ODL contexts.

This study aims to develop an integrated conceptual framework for responsible Generative AI integration in education, with particular attention to technology enhanced learning and open and distance learning contexts. The research objectives were:

a) RO1: To identify key indicators and integration gaps across Instructional Development, Ethical Considerations, and Pedagogical Approach in existing Generative AI integration approaches.

b) RO2: To synthesise these findings into an integrated conceptual framework that supports responsible Generative AI integration in TEL and ODL.

This study used a qualitative conceptual methodology to develop an integrative framework for Generative AI adoption in education. It combined an integrative literature review with a structured cross-framework comparison to synthesise three dimensions: instructional development, ethical considerations, and pedagogical approach. The outcome is the Generative AI Integrated Framework for Education (GAIIFE), a consolidated conceptual model intended to guide responsible and instructionally grounded use of Generative AI in teaching and learning.

This study adopted a conceptual framework development methodology, as outlined by Torraco (2005), to address the under-theorised integration of Generative AI in education. Conceptual research, in this context, involves building new theoretical models by synthesising diverse literature and identifying gaps across current frameworks. The resulting framework, the Generative AI Integrated Framework for Education (GAIIFE), integrates ethical, pedagogical, and instructional dimensions to guide responsible AI adoption in teaching and learning.

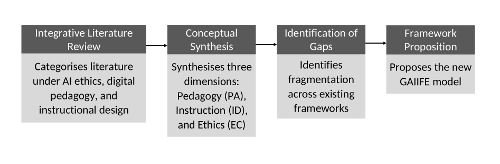

Figure 1 illustrates the four-stage conceptual framework development process applied in this study, adapted from Torraco (2005).

As shown in Figure 1, GAIIFE was developed through a four-stage conceptual process: conducting an integrative literature review, synthesising findings into three dimensions (PA, ID, EC), identifying integration gaps in existing frameworks, and proposing GAIIFE to unify these dimensions into a cohesive model.

To synthesise the three analytical dimensions and develop GAIIFE, this study used two data sources. The first source supported the synthesis of Instructional Development (ID), Ethical Considerations (EC), and Pedagogical Approach (PA) across prior Generative AI education literature. The second source supported the focused qualitative comparative analysis (QCA) comparison of two influential conceptual frameworks.

Data Source for Synthesising ID, PA and EC

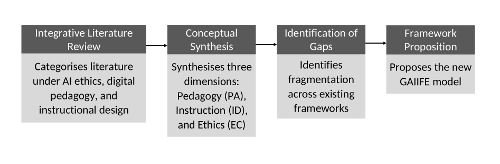

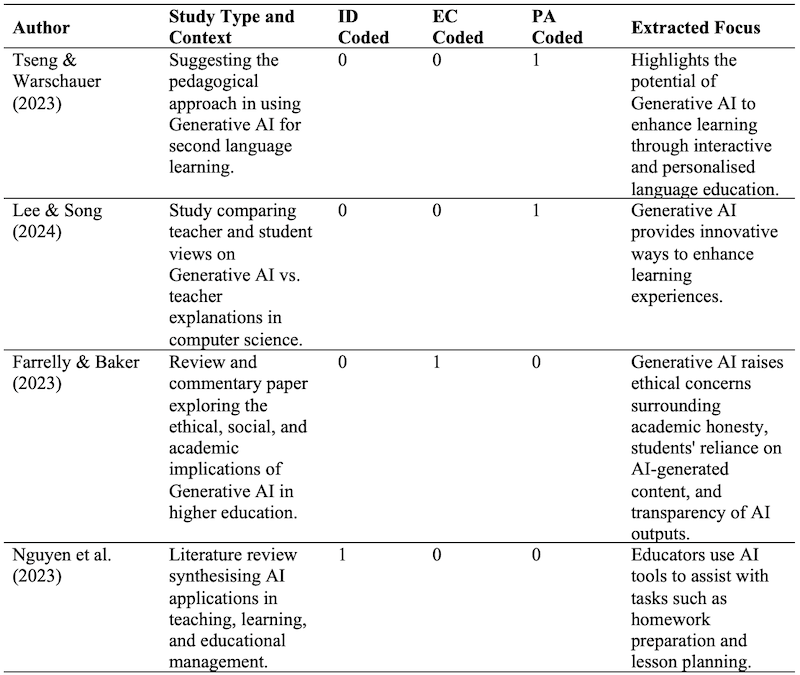

For the synthesis stage, this study used a targeted purposive set of 17 peer reviewed studies on Generative AI integration in education. The aim was conceptual coverage across instructional development, ethical considerations, and pedagogical approaches. Studies were included when they discussed Generative AI use in teaching and learning and provided sufficient conceptual detail to support coding into at least one of the three dimensions. Studies were excluded when they focused mainly on technical model performance, did not address educational integration, or provided only brief mentions without actionable concepts. The set was identified through iterative searching followed by title and abstract screening for relevance. Each included study was then reviewed and coded against ID, EC, and PA, and the coded outputs were summarised in Table 1 to inform the indicators used in this study.

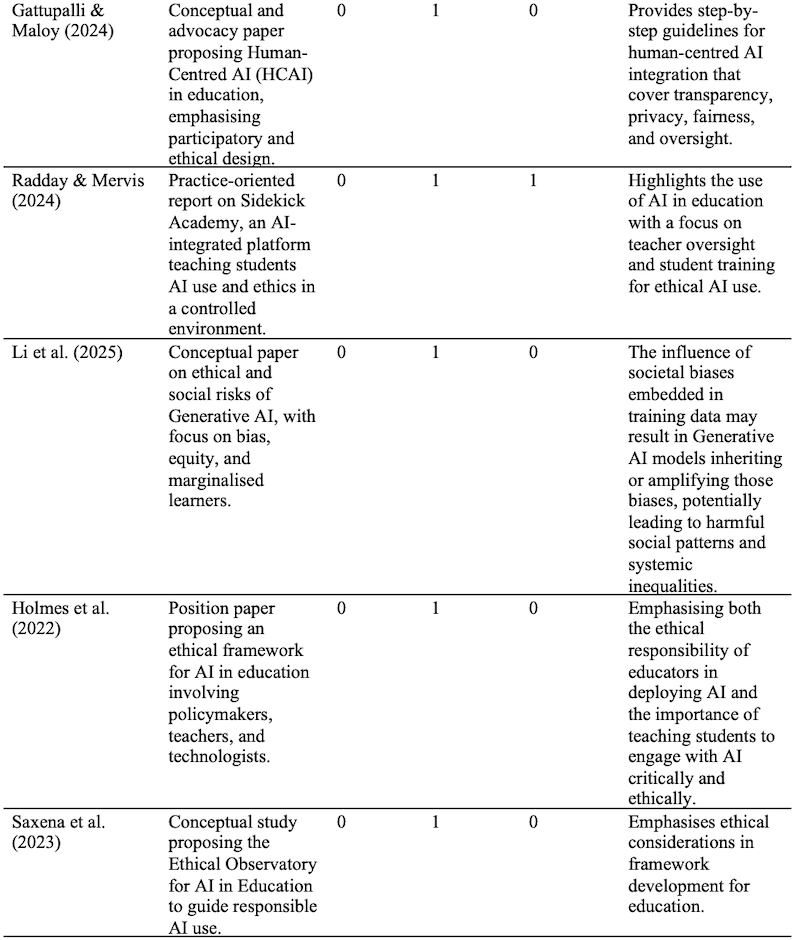

Table 1: Mapping of Purposively Selected Studies to the Three Synthesis Dimensions ID, EC, and PA

Note: 1 means the study provides clear guidance or substantive discussion that can be mapped to the dimension; 0 means the dimension is absent or only mentioned briefly without usable indicators.

Data Source for Qualitative Comparative Analysis

For the qualitative comparative analysis (QCA) stage, the unit of analysis was the conceptual framework rather than the individual study. This part therefore draws on two recent frameworks, published in 2023 and 2024, that represent contrasting emphases in the current discourse. The Ethical Observatory for AI in Education focuses on ethical governance and responsible AI principles (Saxena et al., 2023), while GAIDE focuses on Generative AI supported instructional development and content design (Dickey & Bejarano, 2024). These two frameworks were selected because they are well cited and provide sufficient detail for binary calibration against the three conditions used in this study: ID, EC, and PA.

Qualitative Comparative Analysis (QCA) is a research method used to compare how different combinations of conditions lead to specific outcomes (Ragin, 2014). Unlike traditional statistical methods that require large datasets, QCA is suited to studies with a small number of cases. It helps identify which conditions, or combinations of conditions, are necessary or sufficient for an outcome to occur.

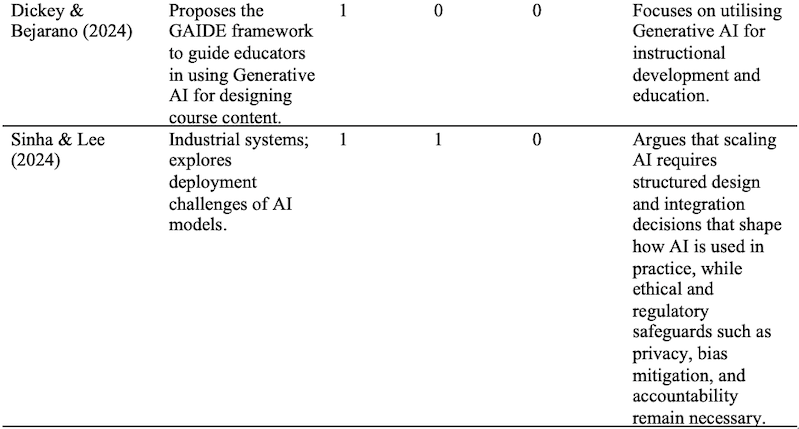

QCA uses Boolean logic, a type of reasoning that applies simple logical operators such as AND, OR, and NOT to analyse data. In this study, QCA was used to determine how three key elements, namely Instructional Development (ID), Ethical Considerations (EC), and Pedagogical Approach (PA), were addressed in two existing frameworks. The goal was to identify which elements were included, which were missing, and how these comparisons can guide the development of a more balanced model: the Generative AI Integrated Framework for Education (GAIIFE). The five stages of the QCA process adopted in this study are illustrated in Figure 2.

In this study, QCA concepts were used in a simplified form to support structured comparison. Each framework was calibrated in binary form for the presence or absence of ID, EC, and PA, then compared to identify missing conditions and underrepresented combinations. The comparison informed the synthesis of GAIIFE by ensuring all three dimensions were addressed in one integrated framework.

Building on the data source and selection process described previously with the summary presented in Table 1, this section reports the results of the conceptual synthesis. The purpose of this section is to answer RQ1 by identifying the indicators of Instructional Development (ID), Ethical Considerations (EC), and Pedagogical Approach (PA) that are evident across the reviewed studies and frameworks.

Instructional Development Indicators

Instructional Development (ID) is characterised by indicators that describe how Generative AI is incorporated into the design of learning activities and teaching support. Two recurring indicators were observed. First, several studies frame Generative AI as a tool that supports educator workflow and instructional preparation, including planning and developing learning materials and resources (Dickey & Bejarano, 2024; Nguyen et al., 2023). Second, studies indicate a readiness and guidance gap, where staff report uncertainty about best practice and a lack of clear instructional direction for meaningful classroom integration (Lee et al., 2024). These indicators show that instructional attention often centres on efficiency and support for teaching tasks, with less explicit focus on structured learning task design beyond content generation.

Ethical Considerations Indicators

Ethical Considerations (EC) are characterised by indicators related to integrity, fairness, and governance for responsible Generative AI use. A dominant indicator concerns academic integrity and fairness safeguards, including risks associated with overreliance on AI detection tools and the potential harm of false accusations, especially for marginalised learners (Farrelly & Baker, 2023). A second indicator involves bias and equity risk, where studies highlight how embedded social biases may disadvantage learners and require inclusive mitigation strategies (Li et al., 2025). A third indicator focuses on transparency, accountability, and ethical oversight responsibilities for institutions and stakeholders (Holmes et al., 2022; Saxena et al., 2023). A fourth indicator reflects policy and governance responses, where institutional frameworks and policy toolkits are proposed to guide responsible adoption (Chan, 2023; Mouta et al., 2024). Overall, EC are strongly represented in the reviewed literature and are often framed through principles and governance mechanisms.

Pedagogical Approach Indicators

Pedagogical Approach (PA) is characterised by indicators that relate Generative AI use to teaching practice and learner-centred learning processes. One indicator reflects classroom level pedagogical guidance that positions Generative AI as a learning support and encourages adaptation of teaching practice rather than resistance to AI use (Tseng & Warschauer, 2023). Another indicator involves supervised and structured classroom use that includes teacher oversight and learner preparation for responsible engagement with Generative AI (Radday & Mervis, 2024). Although some sources report that Generative AI can enhance learning experiences and engagement, these discussions are often presented as practical opportunities without explicit grounding in learning theory or clear pedagogical mechanisms (Lee & Song, 2024). This indicates that PA is less consistently articulated than EC and is more often described at the level of classroom practice than theory explicit models.

Synthesising the Key Indicators

The reviewed studies and frameworks indicate that Ethical Considerations (EC) are most consistently represented through safeguards, bias and equity risk, transparency and accountability, and governance indicators (Chan, 2023; Farrelly & Baker, 2023; Holmes et al., 2022; Li et al., 2025; Mouta et al., 2024; Saxena et al., 2023). Instructional Development (ID) was mainly represented through educator workflow support and reported gaps in instructional guidance and readiness (Dickey & Bejarano, 2024; Lee et al., 2024; Nguyen et al., 2023). Pedagogical Approach (PA) was least consistently represented and was most often expressed through classroom practice guidance and teacher mediated use rather than explicit theory-driven pedagogical framing (Lee & Song, 2024; Radday & Mervis, 2024; Tseng & Warschauer, 2023).

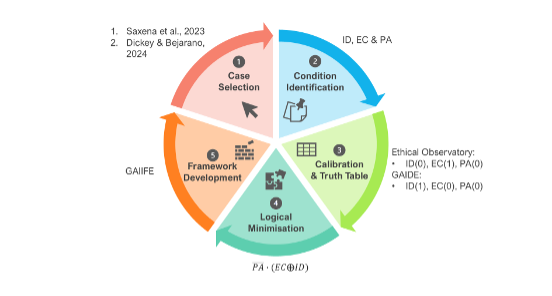

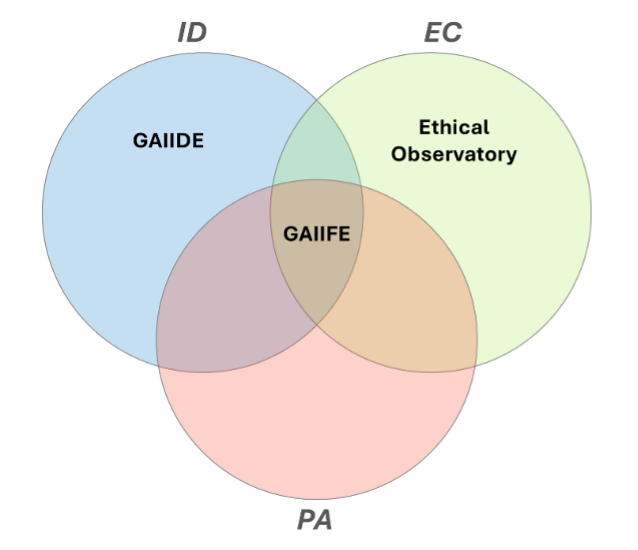

To answer RQ2, the three dimensions were compared across the reviewed studies and frameworks using the binary coding reported in Table 1. An integration gap is defined here as the absence of co-occurrence between dimensions, or the presence of one dimension without the supporting elements required for coherent classroom enactment. To provide an overview of how these dimensions co-occur in the literature, Figure 3 visualises the overlap patterns based on the binary coding in Table 1.

Figure 3 shows that EC was most frequently represented, with 10 studies addressing EC alone. In contrast, two studies addressed ID alone and two studies addressed PA alone. Only three studies demonstrated partial integration across dimensions, with one study combining EC and ID, one combining EC and PA, and one combining ID and PA. Overall, the evidence indicates a fragmentation gap, where ethical guidance was often not translated into instructional structures or pedagogical enactment, and design focused studies rarely integrated ethical integrity and learning theory in a single approach. This pattern motivates the development of a unified framework that integrates ethical integrity, instructional design, and pedagogical grounding for responsible generative AI use in education.
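As a rough illustration of how overlap counts of this kind can be tallied from binary coding, the Python sketch below rebuilds 17 placeholder rows from the pattern counts stated in the paragraph above. The per-study rows of Table 1 are not reproduced here, so the rows themselves, the `PATTERN_COUNTS` mapping, and the helper names `build_rows` and `label` are assumptions made for illustration, not the authors' coding sheet.

```python
from collections import Counter

DIMENSIONS = ("ID", "EC", "PA")

# Pattern counts as stated in the text (order of each tuple: ID, EC, PA).
# The rows generated from them are anonymous placeholders, not the real
# studies listed in Table 1.
PATTERN_COUNTS = {
    (0, 1, 0): 10,  # EC only
    (1, 0, 0): 2,   # ID only
    (0, 0, 1): 2,   # PA only
    (1, 1, 0): 1,   # ID and EC
    (0, 1, 1): 1,   # EC and PA
    (1, 0, 1): 1,   # ID and PA
}


def build_rows(pattern_counts):
    """Expand pattern counts into one binary-coded row per study."""
    rows = []
    for pattern, n in pattern_counts.items():
        for _ in range(n):
            rows.append(dict(zip(DIMENSIONS, pattern)))
    return rows


def label(row):
    """Name the combination of dimensions coded as present in a row."""
    present = [d for d in DIMENSIONS if row[d] == 1]
    return "+".join(present) if present else "none"


rows = build_rows(PATTERN_COUNTS)

# How many studies fall into each overlap region.
overlap = Counter(label(r) for r in rows)

# How many studies address each dimension at all, alone or in combination.
coverage = {d: sum(r[d] for r in rows) for d in DIMENSIONS}

# Studies coded 1 on all three dimensions.
fully_integrated = overlap.get("ID+EC+PA", 0)

print(len(rows), dict(overlap), coverage, fully_integrated)
```

Under these counts no row is coded 1 on all three dimensions, which is the fragmentation pattern the paragraph describes.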

This section answers RQ3 by examining whether existing frameworks integrate Instructional Development (ID), Ethical Considerations (EC), and Pedagogical Approach (PA) within a single approach. The unit of analysis was the framework. Two contrasting frameworks were selected to make the presence or absence of each dimension visible through a simple binary configuration.

Case Selection and Condition Identification

The Ethical Observatory for AI in Education focuses on ethical governance and responsible AI principles (Saxena et al., 2023), while GAIDE focuses on Generative AI supported instructional development and content design (Dickey & Bejarano, 2024). Each framework was coded for the presence (1) or absence (0) of ID, EC, and PA based on whether it provided substantive, actionable guidance that mapped to each dimension.

Calibration Results and Configurations

The Ethical Observatory demonstrated a strong emphasis on EC but provided limited guidance for ID and did not articulate PA, yielding the configuration:

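$$\text{Ethical Observatory}: \quad \sim\text{ID} \cdot \text{EC} \cdot \sim\text{PA} \qquad (\text{ID} = 0,\ \text{EC} = 1,\ \text{PA} = 0)$$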

GAIDE emphasised ID for AI assisted content and activity design but did not address EC and did not ground AI use in an explicit PA, yielding the configuration:

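$$\text{GAIDE}: \quad \text{ID} \cdot \sim\text{EC} \cdot \sim\text{PA} \qquad (\text{ID} = 1,\ \text{EC} = 0,\ \text{PA} = 0)$$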

These configurations show that each framework addresses only one of the three dimensions, and both omit PA.
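The binary calibration described above can be sketched in a few lines of Python. The codings follow the text (the Ethical Observatory present on EC only, GAIDE present on ID only). The function names `configuration` and `absent_everywhere`, and the `~` and `*` rendering of NOT and AND, are illustrative choices and not part of either framework or of any QCA software package.

```python
CONDITIONS = ("ID", "EC", "PA")

# 1 = substantive, actionable guidance; 0 = absent or only limited guidance.
FRAMEWORKS = {
    "Ethical Observatory": {"ID": 0, "EC": 1, "PA": 0},
    "GAIDE": {"ID": 1, "EC": 0, "PA": 0},
}


def configuration(coding):
    """Render a binary coding as a Boolean term; '~' marks an absent condition."""
    return " * ".join(c if coding[c] else f"~{c}" for c in CONDITIONS)


def absent_everywhere(frameworks):
    """List the conditions that no framework in the comparison addresses."""
    return [c for c in CONDITIONS if all(f[c] == 0 for f in frameworks.values())]


for name, coding in FRAMEWORKS.items():
    print(f"{name}: {configuration(coding)}")

print("Absent in every framework:", absent_everywhere(FRAMEWORKS))
```

Keeping the coding as plain dictionaries makes it straightforward to add further frameworks to the comparison later without changing the helper functions.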

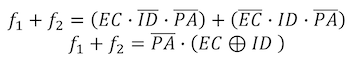

Logical Minimisation (Boolean Reduction)

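To express this pattern compactly, the two configurations can be combined and factored as follows, where $\sim$ denotes absence, $\cdot$ denotes AND, and $+$ denotes OR:

$$(\sim\text{ID} \cdot \text{EC} \cdot \sim\text{PA}) + (\text{ID} \cdot \sim\text{EC} \cdot \sim\text{PA}) = \sim\text{PA} \cdot (\sim\text{ID} \cdot \text{EC} + \text{ID} \cdot \sim\text{EC})$$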

Combining them yields a shared structural limitation. Both frameworks exclude PA, while EC and ID appear in an either/or pattern rather than as an integrated set. This result indicates that pedagogical grounding was absent across both frameworks, while ethical and instructional strengths appeared separately and did not co-occur within a single model.
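One way to check this reading is to enumerate all eight combinations of the three conditions and confirm that the disjunction of the two observed configurations (EC present with ID and PA absent, or ID present with EC and PA absent) is equivalent to "PA absent and exactly one of ID or EC present". The sketch below does this by brute force. The function names are hypothetical, and the check is a plain truth-table comparison rather than a full QCA minimisation procedure.

```python
from itertools import product


def observed(id_, ec, pa):
    """Disjunction of the two calibrated configurations."""
    ethical_observatory = (not id_) and ec and (not pa)
    gaide = id_ and (not ec) and (not pa)
    return ethical_observatory or gaide


def reduced(id_, ec, pa):
    """Factored form: PA is absent and exactly one of ID or EC is present."""
    return (not pa) and (id_ != ec)


# All 2^3 combinations, each in the order (ID, EC, PA).
rows = list(product([False, True], repeat=3))

# The two expressions agree on every row of the truth table.
equivalent = all(observed(*r) == reduced(*r) for r in rows)

# Rows covered by the existing frameworks.
covered = [r for r in rows if observed(*r)]

# The fully integrated configuration (all three present) is not covered.
integrated_is_covered = observed(True, True, True)

print(equivalent, covered, integrated_is_covered)
```

The uncovered all-present row is the configuration that GAIIFE is intended to occupy.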

Visual Interpretation and Implication for GAIIFE

Figure 4 reinforces this finding. GAIDE occupies the ID space, the Ethical Observatory occupies the EC space, and the PA dimension remains unoccupied by either framework. GAIIFE is therefore positioned at the intersection of ID, EC, and PA to address this fragmentation by linking ethical guardrails, instructional task and assessment design, and pedagogical grounding within one coherent structure.

Framework Development: Synthesising GAIIFE

Based on the comparison and analysis in the previous sections, this part of the study focuses on developing the Generative AI Integrated Framework for Education (GAIIFE). The findings showed that neither GAIDE nor the Ethical Observatory framework fully supported all the areas needed for responsible and effective use of Generative AI in education. One focused on instruction and the other on ethics, but both omitted the pedagogical approach. GAIIFE was designed to bring these missing parts together. It combines three areas, Instructional Development (ID), Ethical Considerations (EC), and Pedagogical Approach (PA), into one framework.

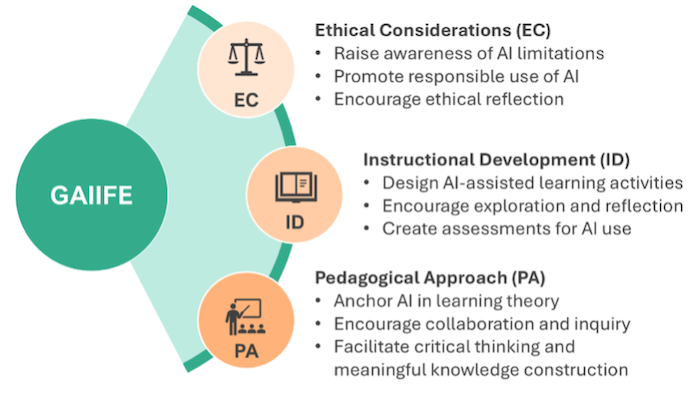

Building on RQ1 and the gaps identified in RQ2 and RQ3, this section presents the Generative AI Integrated Framework for Education, GAIIFE. GAIIFE provides an integrated structure for balanced Generative AI integration in technology enhanced learning (TEL) and open and distance learning (ODL). It combines three dimensions that frequently appear in the literature but are rarely integrated within one approach, namely Ethical Considerations (EC), Instructional Development (ID), and Pedagogical Approach (PA). Figure 5 presents these dimensions as interdependent pillars for planning and implementing Generative AI supported learning.

GAIIFE guides aligned decisions across ethics, instruction, and pedagogy. Responsible integration requires coordinated design, where ethical safeguards shape instructional choices, instructional design operationalises pedagogical intent, and pedagogy defines the role and limits of Generative AI in learning activities. This alignment is especially important in technology enhanced learning (TEL) and open and distance learning (ODL) where learning support is mediated by digital systems and educator oversight is often reduced.

Ethical Considerations Pillar

EC ensures responsible and transparent Generative AI use by addressing risks that affect learning credibility and trust. It is operationalised through three indicators: awareness of AI limitations, responsible use in learning and assessment, and ethical reflection on appropriateness and context sensitivity. EC functions as a guardrail for how Generative AI is introduced, used, and evaluated.

Instructional Development Pillar

ID focuses on designing tasks, supports, and assessment for purposeful Generative AI use. It is operationalised through three indicators: structured AI assisted learning activities with clear purpose and boundaries, exploration and reflection steps that require critique and refinement, and assessment criteria that evaluate both outcomes and how learners used and verified Generative AI outputs. ID translates ethical and pedagogical requirements into practical design decisions.

Pedagogical Approach Pillar

PA grounds Generative AI integration in learning theory and student-centred goals to avoid convenience led adoption that prioritises tool use over learning. It is operationalised through three indicators: explicit learning theory alignment, collaboration and inquiry supported by Generative AI, and critical thinking with meaningful knowledge construction through interpretation, evaluation, and application. This pillar is central in TEL and ODL because learners often work independently and require clear scaffolding to sustain engagement.
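To suggest how the nine indicators across the three pillars might be turned into a simple design checklist, the sketch below encodes them as a dictionary and reports which indicators a draft learning activity has not yet evidenced. The indicator wording is condensed from the pillar descriptions above. The `audit` function, the `draft` example, and the idea of recording evidence as true/false flags are assumptions made for illustration and are not part of GAIIFE as published.

```python
# Indicator wording condensed from the three pillar descriptions.
GAIIFE_INDICATORS = {
    "EC": [
        "awareness of AI limitations",
        "responsible use in learning and assessment",
        "ethical reflection on appropriateness and context sensitivity",
    ],
    "ID": [
        "structured AI assisted activities with clear purpose and boundaries",
        "exploration and reflection steps requiring critique and refinement",
        "assessment criteria covering outcomes and verification of AI outputs",
    ],
    "PA": [
        "explicit learning theory alignment",
        "collaboration and inquiry supported by Generative AI",
        "critical thinking with meaningful knowledge construction",
    ],
}


def audit(design_evidence):
    """Return, per pillar, the indicators with no recorded evidence."""
    gaps = {}
    for pillar, indicators in GAIIFE_INDICATORS.items():
        addressed = design_evidence.get(pillar, {})
        missing = [i for i in indicators if not addressed.get(i, False)]
        if missing:
            gaps[pillar] = missing
    return gaps


# Hypothetical draft activity: EC and ID fully evidenced, PA only partly.
draft = {
    "EC": {i: True for i in GAIIFE_INDICATORS["EC"]},
    "ID": {i: True for i in GAIIFE_INDICATORS["ID"]},
    "PA": {"collaboration and inquiry supported by Generative AI": True},
}

gaps = audit(draft)
for pillar, missing in gaps.items():
    print(pillar, "is missing:", missing)
```

A design that returns an empty dictionary has recorded evidence for every indicator. Whether that evidence is adequate remains a judgement for the educator or instructional designer.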

This study addresses a practical challenge in technology enhanced learning (TEL) and open and distance learning (ODL), where Generative AI adoption is expanding but guidance remains fragmented across ethics, policy, pedagogy and instructional practice (Chan, 2023; Holmes et al., 2022; Nguyen et al., 2023). The synthesis and framework comparison indicate an uneven distribution across the three analytical dimensions. Ethical Considerations (EC) were most consistently addressed; Instructional Development (ID) was commonly framed around workflow efficiency and content generation; and Pedagogical Approach (PA) was least consistently articulated (Dickey & Bejarano, 2024; Saxena et al., 2023; Tseng & Warschauer, 2023). This imbalance is consequential because TEL and ODL require scalable guidance that protects equity and academic integrity while also supporting meaningful engagement and learning quality (Holmes et al., 2022; Li et al., 2025).

Across the reviewed literature, ethical and policy discussions emphasised integrity, fairness, transparency, accountability, and bias risks, but these principles were often expressed at a governance level and were not consistently translated into everyday instructional design decisions (Lauterbach, 2019; van Noordt & Tangi, 2023; Saxena et al., 2023). This translation gap was amplified in ODL contexts, where reduced real-time oversight and assessment exposure heightened the risk of misuse and uncritical acceptance of AI generated outputs (Chan, 2023; Holmes et al., 2022). In contrast, instructional guidance frequently focused on tool use and content production support, with less attention to structuring tasks that require inquiry, verification, and reflection, which can shift learning toward output completion rather than reasoning quality (Dickey & Bejarano, 2024; Nguyen et al., 2023; Sinha & Lee, 2024). Pedagogical grounding was often implied rather than specified as theory informed instructional roles or design principles, limiting educators’ ability to implement consistent scaffolds for higher order thinking and sustained engagement in scalable learning environments (Lee & Song, 2024; Radday & Mervis, 2024; Tseng & Warschauer, 2023). Overall, these findings support the need for an integrated model, and they justify GAIIFE as a framework that aligns ethical safeguards, instructional design decisions, and pedagogical intent as interdependent requirements for responsible Generative AI integration (Holmes et al., 2022; Saxena et al., 2023).

This section presents two illustrative cases showing how GAIIFE can be operationalised in learning.

Scenario 1: Integrating a Pre-existing AI Tool in a Mathematics ODL Course

In this illustrative case, educators use an existing Generative AI tool, such as ChatGPT, to support asynchronous mathematics learning in an ODL setting where learners are geographically dispersed and instructor support is limited. GAIIFE guides ethical integration through data privacy protocols, academic honesty guidelines, and AI literacy activities that encourage students to reflect on AI limitations. Instructionally, the tool is embedded in learning modules that include problem-solving exercises, feedback loops, and self-assessment opportunities. Pedagogically, it supports models like flipped learning and problem-based learning by positioning Generative AI as a dialogic partner rather than a solution provider. This approach supports equitable access for geographically dispersed learners while maintaining a focus on reasoning and verification.

Scenario 2: Custom Built Generative AI Tool in a Python Programming Course

In this illustrative case, educators deploy a custom-built Generative AI tool to support a Python 3 programming course in a TEL context, where students learn through a coding environment, digital labs, and structured learning tasks delivered through a learning management system. GAIIFE guides ethical integration through standardised tool access for all learners, privacy safeguards for submitted code and prompts, transparency cues that signal AI limitations, and responsible use reminders aligned with academic integrity expectations. Instructionally, the tool is embedded into technology-mediated programming activities with clear checkpoints such as explaining code logic, justifying programme structure, documenting test cases, and reflecting on debugging decisions, supported by guided prompts and conceptual clarifications. Pedagogically, it supports experiential learning by structuring iterative cycles of coding, testing, feedback, and reflection within the digital environment, positioning Generative AI as a learning partner rather than an automatic code generator. This TEL-oriented design reduces confusion from mixed Python versions while sustaining a focus on reasoning, verification, and practical skill development.

Summary of Scenario Implications

Across both scenarios, the main contribution is to show how ethical safeguards, instructional design choices, and pedagogical intent can be operationalised together rather than treated separately. This integration is especially relevant in TEL and ODL settings where scalable guidance is needed to protect integrity and equity while sustaining meaningful engagement.

The findings have practical implications for TEL and ODL stakeholders, especially where equity and access are priorities. Educators should design Generative AI use as a learning process rather than an answer shortcut by requiring justification, evidence citation, and reflection on limitations and bias. Learning tasks can incorporate drafting, critique, revision, and comparison with course resources, positioning AI as a dialogic partner that prompts explanation and reasoning.

Instructional designers should develop reusable patterns that scale, such as structured prompting templates, verification checklists, reflection prompts, and rubrics that reward evidence and reasoning over polished output. AI literacy micro activities should be embedded to help learners recognise risks such as hallucination and bias and apply responsible use in practice.

Institutional leaders should complement policy with educator capacity building, assessment guidance, and equity sensitive safeguards. In ODL settings, institutions should ensure access to approved tools, clear disclosure expectations, and integrity support that is fair and consistent.

The work is conceptual and relies on purposive selection, so it does not claim comprehensive coverage of all existing frameworks. The QCA element includes only two frameworks. This choice makes the configurational comparison easier to interpret but it narrows the evidence base. The scenarios are included to illustrate potential use, not to report evaluated implementations. Empirical studies are therefore needed to test feasibility and learning impact, particularly in TEL and ODL settings.

Future work should empirically validate GAIIFE across disciplines and delivery modes, with special attention to TEL and ODL where learner support conditions differ from face-to-face settings. Design-based research could trial GAIIFE informed learning activities and refine the indicators into practical implementation tools such as rubrics, checklists, and staff development modules. Comparative studies can also examine equity outcomes, including how different learner groups experience AI-supported learning and how fairness safeguards perform in practice. Longitudinal research is also needed because Generative AI capabilities and risks evolve quickly, affecting what responsible integration requires over time.

In conclusion, the findings indicate that current approaches to Generative AI in education tend to emphasise either ethical governance or instructional efficiency, while pedagogical grounding is frequently underspecified. GAIIFE responds to this fragmentation by integrating Ethical Considerations (EC), Instructional Development (ID), and Pedagogical Approach (PA) into a single framework suited to TEL and ODL. By supporting balanced integration, the framework offers a practical foundation for institutions seeking to expand access and innovation while protecting equity, integrity, and meaningful student engagement.

AI Usage Statement: Generative AI tools (e.g., ChatGPT) were used during the manuscript preparation process to assist with clarity, grammar, and sentence structure. The authors retain full responsibility for the originality, accuracy, and integrity of the content.

Acknowledgement: This research was fully funded by Universiti Teknologi Malaysia (UTM), (Vot. No. Q.J130000.3853.23H80). The authors would like to express their sincere gratitude to UTM for providing the financial support to carry out this study.

Ahmed, M.H. (2023). Forging ahead with technology enhanced language learning with requisite guardrails. In A. Irgatoğlu & C. Yazgı (Eds.), AELTE 2023 Digital Era in Foreign Language Education Conference Proceedings (pp. 1-16).

Chan, C.K.Y. (2023). A comprehensive AI policy education framework for university teaching and learning. International Journal of Educational Technology in Higher Education, 20(1), 38. https://doi.org/10.1186/s41239-023-00408-3

Copyleaks. (2024, March 17). What impact has AI had on education? https://copyleaks.com/blog/one-year-later-chatgpt-and-education

Dickey, E., & Bejarano, A. (2024). GAIDE: A framework for using generative AI to assist in course content development. 2024 IEEE Frontiers in Education Conference (FIE), 1-9. https://doi.org/10.1109/FIE61694.2024.10893132

Farrelly, T., & Baker, N. (2023). Generative Artificial Intelligence: Implications and considerations for higher education practice. Education Sciences, 13(11), 1109. https://doi.org/10.3390/educsci13111109

Gattupalli, S., & Maloy, R.W. (2024). On human-centered AI in education. University of Massachusetts, Amherst. https://scholarworks.umass.edu/server/api/core/bitstreams/8094b753-46d3-43bc-8b97-ae736c680247/content

Holmes, W., Porayska-Pomsta, K., Holstein, K., Sutherland, E., Baker, T., Shum, S.B., Santos, O.C., Rodrigo, M., Cukurova, M., Bittencourt, I.I., & Koedinger, K.R. (2022). Ethics of AI in education: Towards a community-wide framework. International Journal of Artificial Intelligence in Education, 32(3), 504-526. https://doi.org/10.1007/s40593-021-00239-1

Lauterbach, A. (2019). Artificial Intelligence and policy: Quo vadis? Digital Policy, Regulation and Governance, 21(3), 238-263. https://doi.org/10.1108/DPRG-09-2018-0054

Lee, D., Arnold, M., Srivastava, A., Plastow, K., Strelan, P., Ploeckl, F., Lekkas, D., & Palmer, E. (2024). The impact of generative AI on higher education learning and teaching: A study of educators’ perspectives. Computers and Education: Artificial Intelligence, 6, 100221. https://doi.org/10.1016/j.caeai.2024.100221

Lee, S., & Song, K.-S. (2024). Teachers’ and students’ perceptions of AI-generated concept explanations: Implications for integrating generative AI in computer science education. Computers and Education: Artificial Intelligence, 7, 100283. https://doi.org/10.1016/j.caeai.2024.100283

Li, M., Enkhtur, A., Yamamoto, B.A., Cheng, F., & Chen, L. (2025). Potential societal biases of ChatGPT in higher education: A scoping review. Open Praxis, 17(1), 79-94. https://doi.org/10.55982/openpraxis.17.1.750

Mouta, A., Torrecilla-Sánchez, E.M., & Pinto-Llorente, A.M. (2024). Design of a future scenarios toolkit for an ethical implementation of artificial intelligence in education. Education and Information Technologies, 29(9), 10473-10498. https://doi.org/10.1007/s10639-023-12229-y

Nguyen, T.T.K., Tran, H.T., & Nguyen, M.T. (2023). Artificial Intelligence (AI) in teaching and learning: A comprehensive review. In A. Kaban & A. Stachowicz Stanusch (Eds.), Empowering education: Exploring the potential of artificial intelligence (pp. 140-154). ISTES Organization.

Radday, E., & Mervis, M. (2024). AI, ethics, and education: The pioneering path of Sidekick Academy. Proceedings of the AAAI Conference on Artificial Intelligence, 38(21), 23294-23299. https://doi.org/10.1609/aaai.v38i21.30377

Ragin, C.C. (2014). The comparative method: Moving beyond qualitative and quantitative strategies: With a new introduction. University of California Press.

Saxena, A.K., García, V., Amin, M.R., Salazar, J.M.R., & Dey, S. (2023). Structure, objectives, and operational framework for ethical integration of artificial intelligence in education. Sage Science Review of Educational Technology, 6(1), 88-100.

Sinha, S., & Lee, Y.M. (2024). Challenges with developing and deploying AI models and applications in industrial systems. Discover Artificial Intelligence, 4(1), 55. https://doi.org/10.1007/s44163-024-00151-2

Torraco, R.J. (2005). Theory development research methods. In R.A. Swanson & E.F. Holton III (Eds.), Research in organizations: Foundations and methods of inquiry (1st ed., pp. 351-374). Berrett-Koehler Publishers, Inc.

Tseng, W., & Warschauer, M. (2023). AI-writing tools in education: If you can’t beat them, join them. Journal of China Computer-Assisted Language Learning, 3(2), 258-262. https://doi.org/10.1515/jccall-2023-0008

UNESCO. (2024). What you need to know about digital learning and transformation of education. https://www.unesco.org/en/digital-education/need-know?hub=84636

van Noordt, C., & Tangi, L. (2023). The dynamics of AI capability and its influence on public value creation of AI within public administration. Government Information Quarterly, 40(4), 101860. https://doi.org/10.1016/j.giq.2023.101860

Author Notes

Nur Izzati Zainal is a PhD candidate at the Faculty of Educational Sciences and Technology, Universiti Teknologi Malaysia (UTM). Her current research focuses on generative Artificial Intelligence in education and digital learning innovation. She has been involved in various research and development projects, including biometric identification systems, health monitoring applications, automatic guided vehicles, and deep learning-based medical image classification. Her interdisciplinary background integrates engineering, Artificial Intelligence, and educational technology. Email: atizainal88@gmail.com (https://orcid.org/0009-0007-6398-5379)

Nurul Farhana Jumaat has served with the Faculty of Educational Sciences and Technology, Universiti Teknologi Malaysia since 2015. She is actively involved in research focusing on online learning, instructional technology, and technology enhanced learning. She is an editorial board member of the Malaysian Journal of Social Sciences and Humanities. Email: nfarhana@utm.my (https://orcid.org/0000-0002-4606-489X)

Cite as: Zainal, N.I., & Jumaat, N.F. (2026). A conceptual framework for integrating generative AI in education through ethical, instructional, and pedagogical balance. Journal of Learning for Development, 13(1), 130-146.