Lilian Anthonysamy, Victor Alasa and Sofia Ali

2025 VOL. 12, No. 2

Abstract: The shift to hybrid learning during the Covid-19 pandemic emphasised the need for independent learning and effective digital resource management strategies (RMS). This study, grounded in Social Cognitive Theory, examines how RMS supports students in managing digital distractions and optimising learning outcomes. Data from 275 randomly selected education students were analysed using structural equation modeling. Findings indicate that while time management was crucial, other factors like study environment and help-seeking did not directly influence intellectual capacity. Intellectual capacity played a mediating role, linking RMS with perceived learning outcomes. These insights highlight the significance of cognitive strategies in navigating technology-enabled education.

Keywords: Adaptive Strategies, Resource Management Strategies, Intellectual Capacity, Perceived Learning Outcome, Digital Learning

The Covid-19 pandemic has transformed the landscape of higher education, accelerating the adoption of digital and hybrid learning environments. As students navigate these technologically mediated contexts, effective management of personal and academic resources becomes essential (Anthonysamy & Singh, 2022; Anthonysamy, 2022). Digital Resource Management Strategies (RMS)—including time management, help-seeking, effort regulation, and peer learning—are increasingly recognised as critical components of academic success (Anthonysamy, 2021).

However, many students struggle to apply RMS effectively, particularly in the digital domain, where distractions are abundant and support systems less visible (Anthonysamy et al., 2020). While existing research suggests adopting coping mechanisms, like reducing screen time and engaging in meditation, it does not address the internal distractions individuals face. Previous research has established the importance of self-regulated learning (SRL) in blended environments (Broadbent & Poon, 2015), but few studies have critically examined how intellectual capacity mediates the effect of RMS in digital settings.

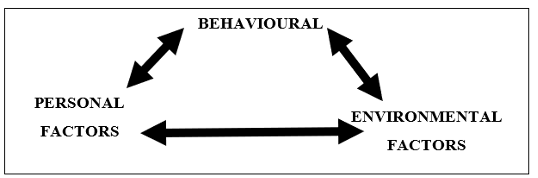

Grounded in Social Cognitive Theory (SCT), which emphasises the interplay of personal, behavioural, and environmental influences on learning (Bandura, 1986), this study examines how digital RMS impacts students’ perceived learning outcomes. SRL, an offshoot of SCT, identifies RMS as a central mechanism through which learners manage attention, motivation, and cognition in goal-directed learning (Zimmerman & Martinez-Pons, 1986).

While pre-pandemic research explored RMS and learning outcomes, there remains a gap in understanding this relationship within remote digital learning contexts (Naujoks et al., 2021). Additionally, the potential mediating role of intellectual capacity has received limited attention (Anthonysamy, 2021; Anthonysamy et al., 2020). This study seeks to deepen our understanding of RMS and perceived learning outcomes by examining the mediating effect of intellectual capacity. By investigating the extent to which RMS enhances intellectual capacity and student success, we aim to provide updated empirical evidence for educators and researchers.

This research addresses two research questions:

According to SCT (Figure 1), learning occurs in a social setting through the reciprocal interaction of the individual, the environment, and behaviour. In other words, this theory is concerned with both internal and external determinants. Albert Bandura's key work on why individuals choose specific human behaviours (Bandura, 1986) inspired SCT. According to SCT, changes in learning behaviour are influenced by personal variables, behaviour, and the environment. Personal impacts include a person's knowledge, cognitive views like metacognition, and affective elements like motivation and self-efficacy. The behavioural factor is how individuals respond to their performance and the environment, which includes external support such as their classmates and parents, the quality of education, feedback, and information access. In turn, the environment can impact or change a person's behaviour or knowledge. Social interaction among instructors, peers, or parents could also be part of the environment.

Drawing from SCT, RMS empower students to manage their learning environment and resources (Zimmerman & Martinez-Pons, 1986). These strategies include time management, environment structuring, effort regulation, peer learning, and help-seeking (Pintrich et al., 1991). Effective time management, a key component of RMS, involves planning, scheduling, and prioritising learning tasks (Effeney et al., 2013; Zimmerman & Martinez-Pons, 1986). This is especially crucial in digital learning, where students manage their own time and location (Hafizah et al., 2016). However, research suggests time management can be a challenge for students (Zhao et al., 2023; Hafizah et al., 2016).

The impact of the study environment on student learning is debated. While some studies found a link between the environment and cognitive abilities (Barrot et al., 2021), others suggest it has no significant effect (Spanjers et al., 2015). This research aims to contribute to this discussion by examining the relationship between these factors:

H1: Time and Study Environment (TSE) is positively related to students’ intellectual capacity (CA).

Drawing from Effeney et al. (2013), peer learning involves students collaborating (e.g., online study groups). While some research suggests peer learning enhances cognitive abilities (Krishnan & Hassan, 2021), others report mixed results (Hargreaves et al., 2022). Fear of online presentations and weaker online friendships might contribute to these inconsistencies (Hargreaves et al., 2022). This study aims to explore the impact of peer learning in a digital environment. Therefore, based on the discussion above, it is hypothesised that:

H2: Peer Learning (PL) is positively related to students’ intellectual capacity (CA).

Students seeking help from peers or instructors (help-seeking) is the most common RMS (Richardson et al., 2012). Studies show a positive link between help-seeking and academic performance, highlighting the importance of academic support (Amiri Gharghani et al., 2019; Anthonysamy et al., 2020). However, recent research suggests students struggle with help-seeking in online learning environments (Rasheed et al., 2020).

The discussion above justifies the need to test the following hypothesis:

H3: Help-Seeking (HS) is positively related to students’ intellectual capacity (CA).

Effort regulation is defined as an individual's ability to persist in the face of academic hurdles (Richardson et al., 2012). A student with effort regulation studies even when the learning material is boring or difficult, continues to explore specific software for an assignment even when it is complicated, or soldiers on to view online tutorials to learn how to complete a difficult academic task. In other words, effort regulation refers to how far a student goes to achieve a learning goal. When a learner is unable to understand the online information being studied, he or she will move back and forth to figure out the material. Because the use of this strategy demonstrates the student's dedication to completing a goal, effort regulation may help students attain learning performance in digital learning. Furthermore, effort regulation could help students cope better with setbacks and failures in the digital learning environment. Chang (2005) found that students' learning performance was determined by effort regulation. Although effort regulation is important for students' perceived learning outcomes, Hafizah et al. (2016) noted that Malaysian undergraduates' effort regulation was poor, indicating that some Malaysian students put less effort into academic assignments. Furthermore, the literature indicates that students who engage in effortful learning activities improve their cognitive capacities compared to those who put in less effort (van Gog et al., 2020; Cazan, 2020). Students who exhibit greater effort regulation engage in deeper processing of learning information, which contributes to their learning. Another study (Biwer et al., 2021) found that during the pandemic, many students were less able to regulate their effort and concentration than before the pandemic. This could imply that students were struggling to concentrate and cope with the new mode of learning, which was studying in isolation, because the load of the learning process had transferred to the student (Usher & Schunk, 2018). Thus, it is hypothesised that:

H4: Effort Regulation (ER) is positively related to students’ intellectual capacity (CA).

The Concept of Intellectual Capacity in the Context of Digital Learning

Gottfredson (1997) described intellectual capacity as a "mental capability that involves the ability to reason, plan, solve problems, think abstractly, comprehend complex ideas, learn quickly, and learn from experience" (p. 13). Learners' ability to actively manage their learning environment and control relevant resources during learning necessitates the use of resource management strategies (Greene et al., 2014). As a result, students with good resource management abilities are able to use the resources around them to work toward a learning goal. Consequently, students learn how to create situations that may aid their cognitive ability (Pintrich & Garcia, 1991). Prior reports suggest that resource management strategies do influence students' cognitive abilities in digital learning.

The Role of Intellectual Capacity in Enhancing Students’ Perceived Learning Outcomes

Learning outcomes measure student achievement and programme effectiveness. Assessing these outcomes is crucial in digital learning environments. National accreditation bodies, like Malaysia's MQA, require programmes to define learning objectives in cognitive, emotional, and psychomotor domains (Bloom, 1956). Student grades are a common measure of cognitive learning, but perceived learning outcomes, which focus on the student's learning experience, are also valuable for evaluating digital learning effectiveness (Eom et al., 2006). Studies suggest perceived learning outcomes might even be more important than instructor quality (Teng & Baum, 2013). Since digital learning encompasses the cognitive, affective, and psychomotor domains, measuring perceived learning outcomes requires a comprehensive approach (Whiting, 2011). Self-reporting instruments offer an effective way to capture these diverse learning experiences (NCEMS, 1994). While some research finds no direct link between intellectual capacity and perceived learning outcomes (Pagani et al., 2016), students' perceptions undoubtedly influence their success in online environments (Carver & Kosloski, 2015). This study explores the role of intellectual capacity in students' perceptions of learning outcomes in digital learning.

Based on the above, the following hypothesis is suggested:

H5: Intellectual capacity (CA) is positively related to students’ perceived learning outcomes (PLO).

The purpose of this study was to investigate whether intellectual capacity serves as a mediator between RMS and students' perceived learning outcomes. As a result, the following hypotheses are proposed:

H6a: Intellectual capacity (CA) mediates the effect of Time and Study Environment (TSE) on students’ perceived learning outcomes (PLO).

H6b: Intellectual capacity (CA) mediates the effect of Peer Learning (PL) on students’ perceived learning outcomes (PLO).

H6c: Intellectual capacity (CA) mediates the effect of Help-Seeking (HS) on students’ perceived learning outcomes (PLO).

H6d: Intellectual capacity (CA) mediates the effect of Effort Regulation (ER) on students’ perceived learning outcomes (PLO).

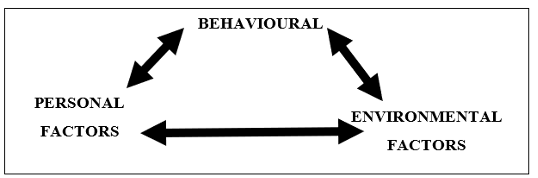

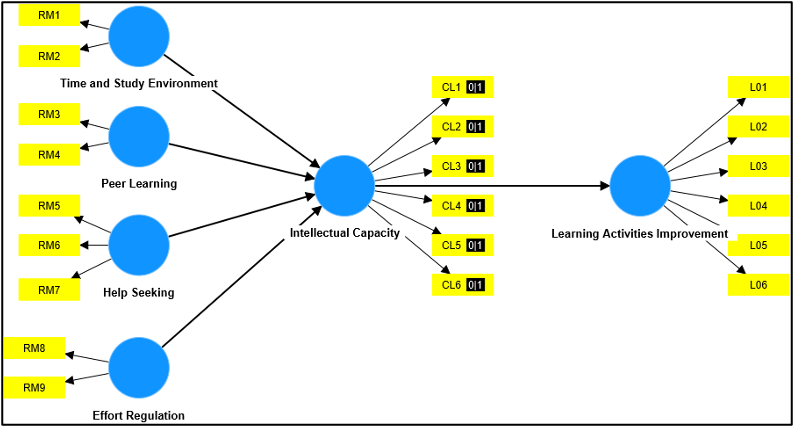

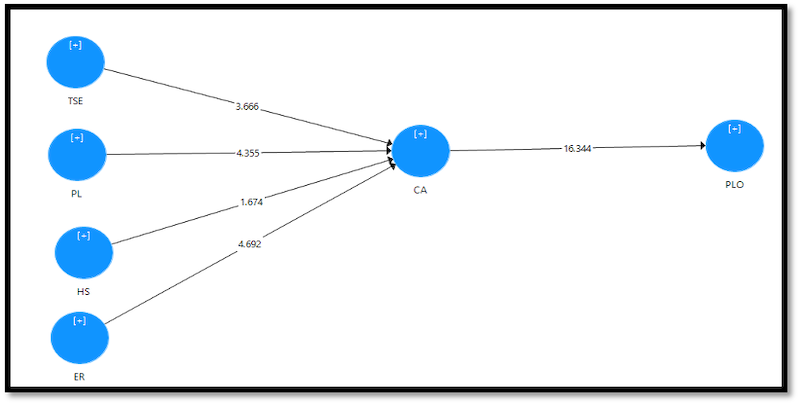

Figure 2 presents the research framework developed for this study.

An online survey was used for this research. This study included a sample of university students to investigate the impact of RMS on intellectual capacity and perceived learning outcomes in digital learning. The respondents for this study were drawn from a single public university in Fiji. A sample of students from the Education discipline was taken. Although the usage of RMS was not associated with specific courses or academic achievement (Loeffler et al., 2019), it would be interesting to investigate the use of RMS among a specific group of Education students (Anthonysamy, 2022).

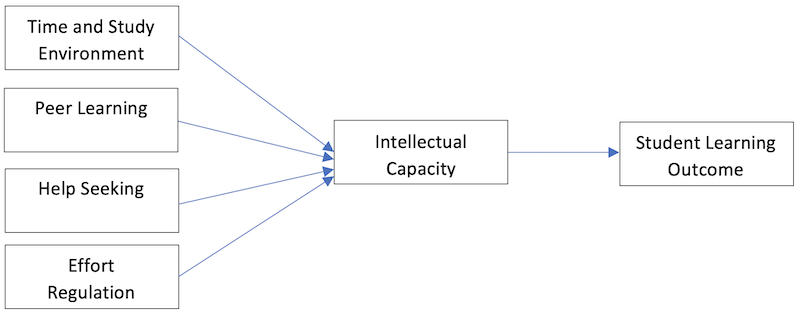

GPower software (v3.1.9.2) was used to compute an appropriate sample size. Cohen’s (1988) criteria were followed, namely effect size (f²) = 0.15, α = 0.05, and β = 0.20 (Figure 3). According to the GPower analysis, the minimum required sample size for the proposed framework was 129, indicating that the study's sample size of 275 was sufficient. The survey was completed by 275 randomly selected university students.

Before data collection began, ethics approval was obtained from the researchers' institution and was granted on May 20, 2022 (Approval Number: FNU-HREC-22). Respondents completed the questionnaire covering the independent variables of RMS in digital learning and the dependent variables of intellectual capacity and perceived learning outcomes.

The instrument used in this study was adapted from validated instruments from several sources, including the Motivated Strategies for Learning Questionnaire (MSLQ) (Pintrich et al., 1991) and the Cognitive Affective Psychomotor (CAP) Perceived Learning scale, which was used to measure perceived learning outcomes across the cognitive, affective, and psychomotor domains in higher education digital learning environments (Rovai et al., 2009). Intellectual capacity scales were also adapted from van Laar et al. (2017) and Ng (2012).

Brown (2010) recommended using a 5-point scale to measure the frequency of usage of resource management methods and intellectual capacity skills. The respondents were told to reply to each question on a 5-point Likert scale depending on frequency of usage, with the five points being 1 (Never), 2 (Rarely), 3 (Sometimes), 4 (Frequently), or 5 (Always) (Brown, 2010). In this study, a 5-point Likert scale was chosen to boost the response rate and quality while decreasing respondents' annoyance level because it would appear less confusing (Babakus & Mangold, 1992). A 7-point Likert scale was also avoided since the questions could be influenced by response style biases (Paulhus, 1991).

Brown (2010) employed a 6-point Likert scale to assess perceived learning outcomes: 1 (Strongly Disagree), 2 (Disagree), 3 (Slightly Disagree), 4 (Slightly Agree), 5 (Agree), and 6 (Strongly Agree). The decision to use a 6-point Likert scale rather than a 5-point or 7-point scale was made because the respondents had prior experience with distance online learning and, hence, should have had some views on their learning outcomes. Furthermore, Moser and Kalton (1972) stated that using a 7-point Likert scale might lead to respondents answering based on the mid-point, resulting in uninformative results.

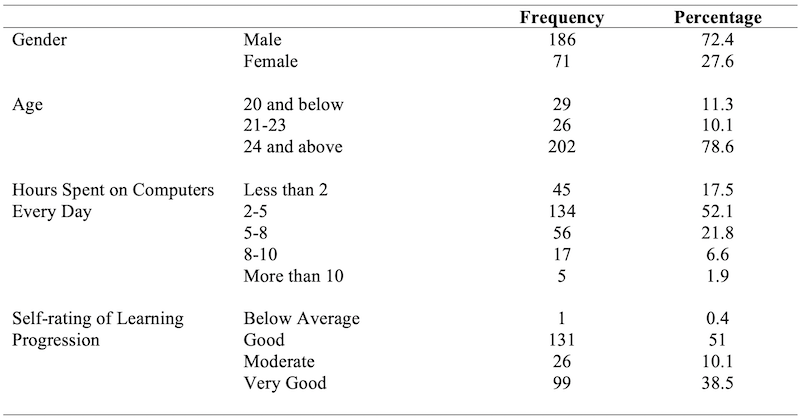

This study examined a single national university with numerous campuses across Fiji. A total of 275 students completed the questionnaire. Table 1 shows the respondents' profiles.

Table 1: Demographic Profile of Respondents

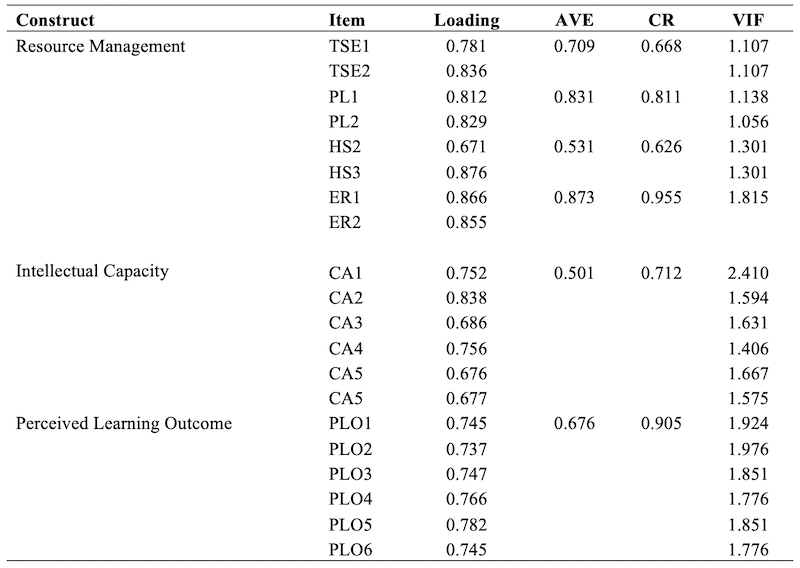

A measurement model describes the relationships between the constructs and their indicators. Before any conclusion can be drawn about the relationships between the constructs in the model, the measurement model must be assessed to ensure it is valid. The assessment of a reflective measurement model comprises internal consistency reliability, indicator reliability, convergent validity, and discriminant validity (Hair et al., 2017; Ramayah et al., 2018). Table 2 presents the assessment of internal consistency reliability using Composite Reliability (CR) and of indicator reliability through factor loadings. In this study, all the constructs obtained satisfactory composite reliability values of between 0.7 and 0.9 (Hair et al., 2017; Ramayah et al., 2018), indicating high internal consistency reliability. This study treated factor loadings of 0.6 and above as acceptable (Byrne, 2016), as shown in Figure 4. Average Variance Extracted (AVE) was used to measure convergent validity, where the acceptable AVE value was 0.5 or higher (Hair et al., 2019). Convergent validity ensures that items explain the construct well by examining whether the items in each construct are highly correlated and reliable.
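The reliability and convergent validity checks described above can be illustrated with a minimal sketch. It uses the commonly cited formulas for composite reliability and AVE computed from standardised loadings. The loadings shown are hypothetical and are not taken from Table 2, and the function names are illustrative only.

```python
def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances).

    Assumes standardised loadings, so each error variance is 1 - loading^2.
    """
    total = sum(loadings)
    error = sum(1 - l ** 2 for l in loadings)
    return total ** 2 / (total ** 2 + error)


def average_variance_extracted(loadings):
    """AVE = mean of the squared standardised loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


# Hypothetical loadings for one construct (not the study's data).
loadings = [0.72, 0.81, 0.68, 0.77]

cr = composite_reliability(loadings)
ave = average_variance_extracted(loadings)

# Thresholds as stated in the text: loadings >= 0.6, CR within 0.7-0.9, AVE >= 0.5.
loadings_ok = all(l >= 0.6 for l in loadings)
cr_ok = 0.7 <= cr <= 0.9
ave_ok = ave >= 0.5

print(f"CR = {cr:.3f}, AVE = {ave:.3f}")
print(f"loadings ok: {loadings_ok}, CR ok: {cr_ok}, AVE ok: {ave_ok}")
```

An item whose loading falls below the cut-off would be a candidate for removal before CR and AVE are recomputed.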

Table 2: Indicator Reliability Analysis

Note: HS1 and CA6 were deleted due to low loadings

Note: TSE: Time and Study Environment, PL: Peer Learning, HS: Help-Seeking, ER: Effort Regulation, CA: Intellectual Capacity, PLO: Perceived Learning Outcome

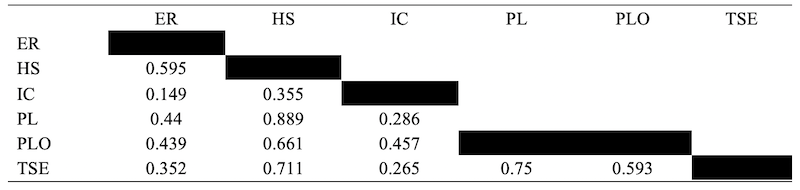

Although there are two approaches to using heterotrait-monotrait (HTMT) ratios, the more common one is HTMT as a criterion, where values greater than 0.85 (Kline, 2011) or greater than 0.90 (Gold et al., 2001) indicate a discriminant validity problem. For this study, the HTMT values obtained were all below both 0.85 and 0.90, indicating no discriminant validity issue, as shown in Table 3.
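As a rough illustration of the HTMT criterion, the sketch below computes the ratio for two constructs from an item correlation matrix. The ratio is the mean correlation between items of different constructs divided by the geometric mean of the average within-construct item correlations. The correlation matrix and item groupings are hypothetical, and this is a simplified sketch rather than the SmartPLS implementation.

```python
from itertools import combinations, product
from math import sqrt
from statistics import mean


def htmt(corr, items_a, items_b):
    """HTMT ratio for two constructs, given an item correlation matrix.

    corr    -- square matrix (list of lists) of item correlations
    items_a -- row/column indices of the items of construct A (at least 2)
    items_b -- row/column indices of the items of construct B (at least 2)
    """
    # Heterotrait-heteromethod: correlations between items of different constructs.
    hetero = mean(abs(corr[i][j]) for i, j in product(items_a, items_b))
    # Monotrait-heteromethod: correlations between items of the same construct.
    mono_a = mean(abs(corr[i][j]) for i, j in combinations(items_a, 2))
    mono_b = mean(abs(corr[i][j]) for i, j in combinations(items_b, 2))
    return hetero / sqrt(mono_a * mono_b)


# Hypothetical correlations: items 0-1 belong to construct A, items 2-3 to B.
corr = [
    [1.00, 0.60, 0.30, 0.20],
    [0.60, 1.00, 0.25, 0.35],
    [0.30, 0.25, 1.00, 0.70],
    [0.20, 0.35, 0.70, 1.00],
]

ratio = htmt(corr, items_a=[0, 1], items_b=[2, 3])
print(f"HTMT = {ratio:.3f}; below 0.85: {ratio < 0.85}; below 0.90: {ratio < 0.90}")
```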

Table 3: Discriminant Validity

Note: TSE: Time and Study Environment, PL: Peer Learning, HS: Help-Seeking, ER: Effort Regulation, CA: Intellectual Capacity, PLO: Perceived Learning Outcome

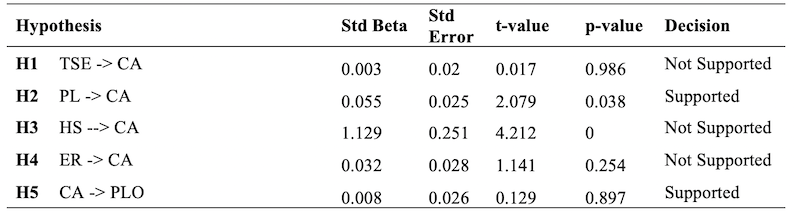

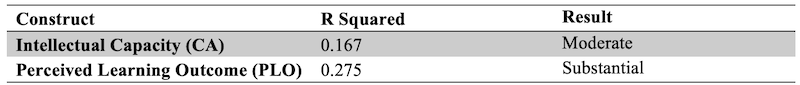

Since PLS-SEM is a non-parametric method that makes no assumptions about the distribution of the data, bootstrapping is employed to estimate standard errors and to determine how significant a path between the constructs is (Hair et al., 2017). The path coefficient indicates the strength of the relationship between the latent variables. Bootstrapping yields the t-values used to assess the size and significance of the path coefficients in the proposed framework, which are reported alongside the R² values. Hair et al. (2017) suggested that 5,000 bootstrap samples are sufficient when the bootstrapping method is run in SmartPLS. Based on this recommendation, this study used 5,000 bootstrap samples. The results of the path coefficient assessment are presented in Table 4 and Figure 5. As observed in Table 5, the R² value for intellectual capacity was 0.167, and that for perceived learning outcome was 0.275. As suggested by Cohen (1988), R² values of 0.26, 0.13, and 0.02 are deemed substantial, moderate, and weak, respectively.
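The bootstrapping logic can be sketched in a few lines. The example below is a deliberate simplification: it treats a single standardised path between two observed scores, which equals their Pearson correlation, whereas SmartPLS re-estimates the whole PLS model on every resample. The data are simulated, and the function names, seeds, and effect size are assumptions made purely for illustration.

```python
import random
from math import sqrt
from statistics import stdev


def pearson(x, y):
    """Pearson correlation, i.e. the standardised slope for one predictor."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def bootstrap_t(x, y, n_boot=5000, seed=42):
    """Return (estimate, bootstrap standard error, t-value) for the path x -> y."""
    rng = random.Random(seed)
    n = len(x)
    estimate = pearson(x, y)
    boots = []
    for _ in range(n_boot):
        # Resample cases with replacement, keeping each (x, y) pair together.
        idx = [rng.randrange(n) for _ in range(n)]
        boots.append(pearson([x[i] for i in idx], [y[i] for i in idx]))
    se = stdev(boots)  # bootstrap standard error of the coefficient
    return estimate, se, estimate / se


# Simulated scores for 275 hypothetical respondents (not the study's data).
data_rng = random.Random(1)
x = [data_rng.gauss(0, 1) for _ in range(275)]
y = [0.4 * a + data_rng.gauss(0, 1) for a in x]

estimate, se, t_value = bootstrap_t(x, y)
print(f"path = {estimate:.3f}, SE = {se:.3f}, t = {t_value:.2f}")
```

The t-value is the original estimate divided by the standard deviation of the bootstrap estimates, so no normality assumption about the raw data is needed.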

Table 4: Hypotheses Testing

Note: p < 0.05, TSE: Time and Study Environment, CA: Intellectual Capacity, PL: Peer Learning, HS: Help-Seeking, ER: Effort Regulation, PLO: Perceived Learning Outcome

Table 5: R-Squared (R2) Criterion

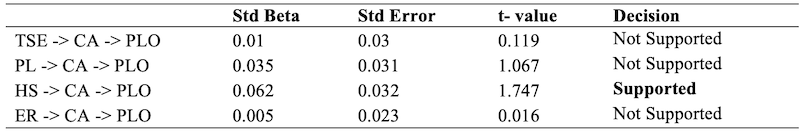

A mediator is an intervening variable that transmits the effect of an antecedent on the outcome (Baron & Kenny, 1986). Mediation is also known as an “indirect effect” (Preacher & Hayes, 2008). The indirect effect is the effect of an independent construct on a dependent construct through one or more intervening or mediating construct(s) supported by strong theoretical or conceptual support (Hair et al., 2017; Preacher & Hayes, 2008). This study used a bootstrapping approach to measure the indirect effect. In this study, the relationship between time and study environment, peer learning, help-seeking, effort regulation, and perceived learning outcomes were tested to identify if they were mediated by intellectual capacity.
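A minimal sketch of a bootstrapped indirect effect follows. It estimates path a (predictor to mediator) and path b (mediator to outcome, controlling for the predictor) by ordinary least squares and multiplies them. It then builds a percentile confidence interval, which is simpler than the bias-corrected interval reported in this study. The data are simulated, and all names, seeds, and coefficients are hypothetical.

```python
import random
from statistics import stdev


def indirect_effect(x, m, y):
    """Return a * b, where a is the slope of M on X and b is the
    coefficient of M in the regression of Y on X and M."""
    n = len(x)
    mx, mm, my = sum(x) / n, sum(m) / n, sum(y) / n
    sxx = sum((u - mx) ** 2 for u in x)
    smm = sum((v - mm) ** 2 for v in m)
    sxm = sum((u - mx) * (v - mm) for u, v in zip(x, m))
    sxy = sum((u - mx) * (w - my) for u, w in zip(x, y))
    smy = sum((v - mm) * (w - my) for v, w in zip(m, y))
    a = sxm / sxx
    b = (sxx * smy - sxm * sxy) / (sxx * smm - sxm ** 2)
    return a * b


def bootstrap_indirect(x, m, y, n_boot=5000, alpha=0.05, seed=7):
    """Return (estimate, t-value, lower, upper) using a percentile interval."""
    rng = random.Random(seed)
    n = len(x)
    estimate = indirect_effect(x, m, y)
    boots = []
    for _ in range(n_boot):
        # Resample whole cases so each (x, m, y) triple stays together.
        idx = [rng.randrange(n) for _ in range(n)]
        boots.append(indirect_effect([x[i] for i in idx],
                                     [m[i] for i in idx],
                                     [y[i] for i in idx]))
    boots.sort()
    lower = boots[int(round(alpha / 2 * n_boot))]
    upper = boots[int(round((1 - alpha / 2) * n_boot)) - 1]
    return estimate, estimate / stdev(boots), lower, upper


# Simulated data for 275 hypothetical respondents (not the study's data).
data_rng = random.Random(3)
x = [data_rng.gauss(0, 1) for _ in range(275)]
m = [0.5 * u + data_rng.gauss(0, 1) for u in x]
y = [0.4 * v + 0.1 * u + data_rng.gauss(0, 1) for u, v in zip(x, m)]

# 2,000 resamples keep the example quick; the study used 5,000.
estimate, t_value, lower, upper = bootstrap_indirect(x, m, y, n_boot=2000)
mediated = lower > 0 or upper < 0  # the interval excludes zero
print(f"indirect = {estimate:.3f}, t = {t_value:.2f}, "
      f"CI = [{lower:.3f}, {upper:.3f}], mediated: {mediated}")
```

Mediation is typically judged supported when the confidence interval does not straddle zero.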

Based on the results of the bootstrapping analysis shown in Table 6, only the indirect effect of help-seeking (β3 = 0.065) obtained a significant t-value of 1.747. Time and study environment, effort regulation, and peer learning showed no mediated effect on perceived learning outcomes.

Table 6: Mediator Assessment

Note: p < 0.05, t-value >1.645, BC = Bias Corrected, UL = Upper Level, LL = Lower Level, TSE: Time and Study Environment, PL: Peer Learning, HS: Help-Seeking, ER: Effort Regulation, CA: Intellectual Capacity, PLO: Perceived Learning Outcome

The findings of this study offer critical insights into the interaction between digital RMS, intellectual capacity, and perceived learning outcomes. Contrary to expectations, time and study environment management did not significantly influence students' intellectual capacity. While time investment and structured learning spaces are traditionally valued, in digital contexts, these factors alone appear insufficient. This aligns with prior findings that digital distractions often override otherwise well-managed environments, disrupting concentration and stunting cognitive engagement (Pérez-Juárez et al., 2023). These findings are also consistent with Hafizah et al. (2016), who emphasised the detrimental impact of procrastination and poor time allocation on students' cognitive development. Ghafar (2023) further supported this by identifying a strong link between poor time management and reduced academic performance.

Peer learning was the only RMS found to significantly influence intellectual capacity. This suggests that in digital learning settings, collaborative activities—when well-structured—can enhance students' cognitive development. While traditional views uphold peer learning as inherently beneficial (Harden, 2002), recent studies emphasise that its effectiveness depends largely on the quality and coordination of interactions (Shao & Kang, 2022). Inconsistent group dynamics or unmoderated peer interactions may diminish its potential, which is particularly relevant in asynchronous online environments.

Despite theoretical support for the benefits of help-seeking, this study found no direct relationship between help-seeking behaviour and intellectual capacity. Many students studying online might avoid seeking help due to perceived stigma, lack of access to timely responses, or uncertainty about available support channels (Li et al., 2023; Bimerew & Arendse, 2024). This reluctance could lead to academic struggles and maladaptive coping strategies such as guesswork or disengagement. Nonetheless, mediation analysis revealed that students with higher intellectual capacity were better able to mitigate the negative effects of limited help-seeking, using their cognitive resources to adapt and overcome academic obstacles.

Effort regulation also did not show a significant direct impact on intellectual capacity. This suggests that in digital contexts, the ability to persist through challenges is diminished by the absence of immediate support and accountability. The literature supports this interpretation—students with low self-regulatory skills are more likely to disengage when faced with difficult or ambiguous tasks (van Gog et al., 2020; Cazan, 2020). Anthonysamy (2021) also noted that students who lack resilience in online learning contexts struggle to build intellectual capacity.

The results confirm a positive relationship between intellectual capacity and perceived learning outcomes. Intellectual capacity, defined by reasoning, planning, and problem-solving abilities, emerged as a key determinant of how students evaluate their own learning. This supports findings from Shi and Qu (2022), who argue that intellectual capacity significantly enhances academic achievement. Furthermore, while Pagani et al. (2016) reported inconsistent links between these variables, this study aligns more closely with Teng and Baum (2013), who found that students' perceived learning outcomes were more influenced by their cognitive skills than by teaching quality.

These findings have significant implications for digital education design. Integrating gamified cognitive training and peer-facilitated interactions into digital platforms could improve student engagement and intellectual development. Adaptive learning environments that personalise feedback and encourage metacognitive reflection may help bridge gaps in effort regulation and help-seeking effectiveness. Furthermore, policy makers and curriculum designers should prioritise intellectual skill-building alongside strategy instruction to support holistic student development in digital learning environments.

Future research should explore the longitudinal effects of cognitive and strategic interventions, particularly those embedded within learning management systems. Investigating how digital RMS and intellectual capacity interact over time could offer deeper insights into sustainable academic success and the design of equitable digital learning environments.

Investigating how students manage learning resources and its impact on their abilities and activities holds significant promise for improving higher education. This research could inform new strategies that benefit students, educators, and universities. By understanding how intellectual capacity plays a role in resource management, we can create more effective learning environments, improve student success, and promote overall well-being. This knowledge can guide changes in teaching, curriculum design, and support services, ultimately fostering a more positive learning experience for all.

Anthonysamy, L. (2022). Motivational beliefs, an important contrivance in elevating digital literacy among university students. Heliyon, 8(12), e11913. https://doi.org/10.1016/j.heliyon.2022.e11913

Anthonysamy, L. (2021). Self-regulated learning strategies for smart learning: A case of a Malaysian university. Asian Journal of Research in Education and Social Science, 3(1), 72-83.

Anthonysamy, L., Koo, A.C., & Hew, S.H. (2020). Self-regulated learning strategies in higher education: Fostering digital literacy for sustainable lifelong learning. Education and Information Technologies, 25(4), 2393-2414. https://doi.org/10.1007/s10639-020-10201-8

Anthonysamy, L., & Singh, P. (2022). The impact of satisfaction, and autonomous learning strategies use on scholastic achievement during Covid-19 confinement in Malaysia. Heliyon, 9(2), e12198. https://doi.org/10.1016/j.heliyon.2022.e12198

Amiri Gharghani, A., Amiri Gharghani, M., & Hayat, A.A. (2019). Correlation of motivational beliefs and cognitive and metacognitive strategies with academic achievement of students of Shiraz University of Medical Sciences. Strides in Development of Medical Education, (In Press), 1-8. https://doi.org/10.5812/sdme.81169

Babakus, E., & Mangold, W. (1992). Adopting the SERVQUAL scale to hospital services: An empirical investigation. Health Services Research, 26(6), 676-686.

Bandura, A. (1986). Social foundations of thought and action: A social cognitive theory. Prentice-Hall.

Baron, R.M., & Kenny, D.A. (1986). The moderator-mediator variable distinction in social psychological research: Conceptual, strategic, and statistical considerations. Journal of Personality and Social Psychology, 51(6), 1173-1182. https://doi.org/10.1037//0022-3514.51.6.1173

Barrot, J.S., Llenares, I.I., & del Rosario, L.S. (2021). Students’ online learning challenges during the pandemic and how they cope with them: The case of the Philippines. Education and Information Technologies, 26, 7321-7338. https://doi.org/10.1007/s10639-021-10589-x

Bimerew, M.S., & Arendse, J.P. (2024). Academic help-seeking behaviour and barriers among college nursing students. Health SA = SA Gesondheid, 29, 2425. https://doi.org/10.4102/hsag.v29i0.2425

Biwer, F., Wiradhany, W., oude Egbrink, M., Hospers, H., Wasenitz, S., Jansen, W., & de Bruin, A. (2021). Changes and adaptations: How university students self-regulate their online learning during the COVID-19 pandemic. Frontiers in Psychology, 12, 642593. https://doi.org/10.3389/fpsyg.2021.642593

Bloom, B.S. (1956). Taxonomy of educational objectives. Handbook 1: Cognitive domain. David McKay Co.

Broadbent, J., & Poon, W.L. (2015). Self-regulated learning strategies and academic achievement in online higher education learning environments: A systematic review. The Internet and Higher Education, 27, 1-13. https://doi.org/10.1016/j.iheduc.2015.04.007

Brown, S. (2010). Likert scale examples. ANR program evaluation: Iowa State University Extension. http://www.extension.iastate.edu/ag/staff/info/likertscaleexamples.pdf

Byrne, B.M. (2016). Structural equation modelling with AMOS: Basic concepts, applications, and programming (3rd ed.). Routledge.

Cazan, A.M. (2020). An intervention study for the development of self-regulated learning skills. Current Psychology, 41, 6406-6423. https://doi.org/10.1007/s12144-020-01136-x

Carver, D.L., & Kosloski, M.J. (2015). Analysis of student perceptions of the psychosocial learning environment in online and face-to-face career and technical education courses. Quarterly Review of Distance Education, 16(4), 7-22.

Chang, M.M. (2005). Applying self-regulated learning strategies in a web-based instruction—An investigation of motivation perception. Computer Assisted Language Learning, 18(3), 217-230. https://doi.org/10.1080/09588220500178939

Cohen, J. (1988). Statistical power analysis for the behavioral sciences (2nd ed.). Lawrence Erlbaum Associates, Publishers.

Effeney, G., Carroll, A., & Bahr, N. (2013). Self-regulated learning: Key strategies and their sources in a sample of adolescent males. Australian Journal of Educational & Developmental Psychology, 13, 58-74.

Eom, S.B., Wen, H.J., & Ashill, N. (2006). The determinants of students’ perceived learning outcomes and satisfaction in university online education: An empirical investigation*. Decision Sciences Journal of Innovative Education, 4(2), 215-235. https://doi.org/10.1111/j.1540-4609.2006.00114.

Ghafar, Z.N. (2023). The relevance of time management in academic achievement: A critical review of the literature. International Journal of Applied and Scientific Research, 1(4), 347-358. https://doi.org/10.59890/ijasr.v1i4.1008

Gold, A., Malhotra, A., & Segars, A. (2001). Knowledge management: An organizational capabilities perspective. Journal of Management Information Systems, 18, 185-214.

Gottfredson, L.S. (1997). Why g matters: The complexity of everyday life. Intelligence, 24(1), 79-132. https://doi.org/10.1016/S0160-2896(97)90014-3

Greene, J.A., Yu, S.B., & Copeland, D.Z. (2014). Measuring critical components of digital literacy and their relationships with learning. Computers and Education, 76, 55-69. https://doi.org/10.1016/j.compedu.2014.03.008

Hafizah, H., Norhana, A., Badariah, B., & Noorfazila, K. (2016). Self-regulated learning in UKM. Pertanika Journal of Social Sciences and Humanities, 24, 77-86.

Hair, J.F., Hult, G.T., Ringle, C.M., & Sarstedt, M. (2017). A primer on partial least squares structural equation modelling (PLS-SEM). Sage.

Hair, J.F., Risher, J.J., Sarstedt, M., & Ringle, C.M. (2019). When to use and how to report the results of PLS-SEM. European Business Review, 31(1), 2-24. https://doi.org/10.1108/EBR-11-2018-0203

Harden, R.M. (2002). Learning outcomes and instructional objectives: Is there a difference? Medical Teacher, 24(2), 151-155.

Hargreaves, J., Ketnor, C., Marshall, E., & Russell, S. (2022). Peer-assisted learning in a pandemic. International Journal of Mathematical Education in Science and Technology, 53(3). https://doi.org/10.1080/0020739X.2021.2008036

Kline, R.B. (2011). Convergence of structural equation modelling and multilevel modelling. In M. Williams & W.P. Vogt (Eds.), Handbook of methodological innovation in social research methods, 562-589. Sage.

Krishnan, S.D., & Hassan, N.C. (2021). Online peer learning amid Covid-19 pandemic in Malaysian higher learning institution. Turkish Journal of Computer and Mathematics Education, 12(14), 1010-1019.

Li, R., Che Hassan, N., & Saharuddin, N. (2023). College student's academic help-seeking behavior: A systematic literature review. Behavioral Sciences (Basel, Switzerland), 13(8), 637. https://doi.org/10.3390/bs13080637

Loeffler, S.N., Bohner, A., Stumpp, J., Limberger, M.F., & Gidion, G. (2019). Investigating and fostering self-regulated learning in higher education using interactive ambulatory assessment. Learning and Individual Differences, 71, 43-57. https://doi.org/10.1016/j.lindif.2019.03.006

Moser, C., & Kalton, G. (1972). Survey methods in social investigation. Heinemann.

National Center for Higher Education Management Systems (NCEMS) (1994). A preliminary study of the feasibility and utility for a national policy of instructional and “good practice” indicators in undergraduate education. Contractor Report for the National Center for Education Statistics. National Center for Higher Education Management Systems. https://files.eric.ed.gov/fulltext/ED372718.pdf

Naujoks, N., Bedenlier, S., Gläser-Zikuda, M., Kammerl, R., Kopp, B., Ziegler, A., & Händel, M. (2021). Self-regulated resource management in emergency remote higher education: Status quo predictors. Frontiers in Psychology, 12, 672741. https://doi.org/10.3389/fpsyg.2021.672741

Ng, W. (2012). Can we teach digital natives digital literacy? Computers & Education, 59, 1065-1078.

Ogan, A., Walker, E., Baker, R., Rodrigo, M.M.T., Soriano, J.C., & Castro, M. J. (2015). Towards understanding how to assess help-seeking behavior across cultures. International Journal of Artificial Intelligence in Education, 25(2), 229-248. https://doi.org/10.1007/s40593-014-0034-8

Pérez-Juárez, M.Á., González-Ortega, D., & Aguiar-Pérez, J.M. (2023). Digital distractions from the point of view of higher education students. Sustainability, 15(7), 6044. https://doi.org/10.3390/su15076044

Pagani, L., Argentin, G., Gui, M., & Stanca, L. (2016). The impact of digital skills on educational outcomes: Evidence from performance tests. University of Milan Bicocca Department of Economics, Management and Statistics Working Paper No. 304. SSRN: https://ssrn.com/abstract=2635471 or http://dx.doi.org/10.2139/ssrn.2635471

Paulhus, D.L. (1991). Measurement and control of response bias. In J.P. Robinson, P.R. Shaver, & L.S. Wrightsman (Eds.), Measures of personality and social psychological attitudes (pp. 17-59). Academic Press.

Pintrich, P.R., & Garcia, T. (1991). Student goal orientation and self-regulation in the college classroom. In M. Maehr & P.R. Pintrich (Eds.), Advances in motivation and achievement: Goals and self-regulatory processes (pp. 371-402). JAI Press.

Pintrich, P.R., Smith, D.F., Garcia, T., & McKeachie, W. (1991). A manual for the use of the Motivated Strategies for Learning Questionnaire (MSLQ). http://files.eric.ed.gov/fulltext/ED338122.pdf

Preacher, K.J., & Hayes, A.F. (2008). Asymptotic and resampling strategies for assessing and comparing indirect effects in multiple mediator models. Behavior Research Methods, 40, 879-891.

Ramayah, T., Cheah, J-H., Chuah, F., Ting, H., & Memon, M.A. (2018). Partial Least Squares Structural Equation Modeling (PLS-SEM) using SmartPLS 3.0: An updated and practical guide to statistical analysis. Pearson.

Rasheed, R.A., Kamsin, A., & Abdullah, N.A. (2020). Challenges in the online component of blended learning: A systematic review. Computers & Education, 144, 103701. https://doi.org/10.1016/j.compedu.2019.103701

Richardson, M., Abraham, C., & Bond, R. (2012). Psychological correlates of university students' academic performance: A systematic review and meta-analysis. Psychological Bulletin, 138, 353-387.

Rovai, A.P., Wighting, M.J., Baker, J.D., & Grooms, L.D. (2009). Development of an instrument to measure perceived cognitive, affective, and psychomotor learning in traditional and virtual classroom higher education settings. Internet and Higher Education, 12(1), 7-13. https://doi.org/10.1016/j.iheduc.2008.10.002

Shao, Y., & Kang, S. (2022). The association between peer relationship and learning engagement among adolescents: The chain mediating roles of self-efficacy and academic resilience. Frontiers in Psychology, 13, 938756. https://doi.org/10.3389/fpsyg.2022.938756

Sheng, L.R. (2021, January 31). Mental health matters. The Star. Started, p. 1.

Shi, Y., & Qu, S. (2022). The effect of cognitive ability on academic achievement: The mediating role of self-discipline and the moderating role of planning. Frontiers in Psychology, 13, 1014655. https://doi.org/10.3389/fpsyg.2022.1014655

Spanjers, I., Könings, K.D., Leppink, J., Verstegen, D., Jong, N., Czabanowska, K., & Merriënboer, J. (2015). The promised land of blended learning: Quizzes as a moderator. Educational Research Review, 15, 59-74.

Teng, C.J.H., & Baum, T. (2013). Academic perceptions of quality and quality assurance in undergraduate hospitality, tourism and leisure programmes: A comparison of UK and Taiwanese programmes. Journal of Hospitality, Leisure, Sport & Tourism Education, 13, 233-243.

Usher, E.L., & Schunk, D.H. (2018). Social cognitive theoretical perspective of self-regulation. In Handbook of self-regulation of learning and performance (2nd ed., pp. 19-35). Taylor & Francis Group. https://doi.org/10.4324/9781315697048-2

van Gog, T., Hoogerheide, V., & van Harsel, M. (2020). The role of mental effort in fostering self-regulated learning with problem-solving tasks. Educational Psychology Review, 32, 1055-1072. https://doi.org/10.1007/s10648-020-09544-y

van Laar, E., van Deursen, A.J.A.M., van Dijk, J.A.G.M., & de Haan, J. (2017). The relation between 21st-century skills and digital skills: A systematic literature review. Computers in Human Behavior, 72, 577-588. https://doi.org/10.1016/j.chb.2017.03.010

Whiting, M.J. (2011). Measuring sense of community and perceived learning among alternative licensure candidates. Journal of the National Association for Alternative Certification, 6(1), 4-12.

Zhao, H., Chen, L., & Panda, S. (2013). Self-regulated learning ability of Chinese distance learners. British Journal of Educational Technology, 45(5), 941-958.

Zimmerman, B.J., & Martinez-Pons, M. (1986). Development of a structured interview for assessing student use of self-regulated learning strategies. American Educational Research Journal, 23(4), 614-628.

Author Notes

Lilian Anthonysamy is an Associate Professor at the Faculty of Management, Multimedia University, Malaysia. Dr. Anthonysamy holds the title of Professional Technologist and is accredited by the Malaysian Board of Technologies (MBOT), which earned her the designation TS (Technology Specialist). Her research includes technology adoption and management, digital transformation and change management, learning sciences, health and wellbeing, educational technology, cognitive science, and user experience. Email: lilian.anthonysamy@mmu.edu.my (https://orcid.org/0000-0003-1241-326X)

Victor Alasa is an experienced educator with over 20 years in higher education, specialising in special and inclusive education in Africa and the Pacific. He is a Lecturer at the University of the South Pacific, holding a PhD in Education (Special and Inclusive Education). His expertise includes program development and is evident in numerous publications. Email: victor.alasa@fnu.ac.fj (https://orcid.org/0000-0001-9518-3652)

Sofia Ali's academic journey reflects a strong commitment to lifelong learning. She earned her PhD from Mangalore University, India, along with qualifications from the University of the South Pacific. Dr. Ali teaches postgraduate courses, supervises Master’s and Doctoral research, and actively mentors early-career researchers across the Pacific. She has represented Fiji (FNU) at major international forums, including OCIES 2023 in Samoa and OCIES 2024 in Australia. Her research interests include ICT integration, primary mathematics, curriculum reform, rural education, women in leadership, and the role of education in advancing the Sustainable Development Goals (SDGs). She is also engaged in issues of climate change education and small island states development. Dr. Ali collaborates on research and development projects with colleagues in Australia, New Zealand, Samoa, and India, and is an active member of WCCES. Email: sofia.ali@fnu.ac.fj (https://orcid.org/0000-0003-2332-7877)

Cite as: Anthonysamy, L., Alasa, V., & Ali, S. (2025). Digital resource management and student learning outcomes in higher education: The mediating role of intellectual capacity. Journal of Learning for Development, 12(2), 259-274.