Ismiyati Ismiyati, Suranto Aw, Haryanto Haryanto, Farida Agus Setiawati, Yulia Ayriza and Mar'atus Sholikah

2026 VOL. 13, No. 1

Abstract: Self-regulated online learning (SOL) is the self-regulation of students who study independently in an online learning environment. Jansen et al. (2017) show that SOL has five dimensions or factors: 1) metacognitive skills, 2) time management, 3) environmental structuring, 4) persistence, and 5) help-seeking. The SOL questionnaire is a new instrument that measures students’ self-regulation in online learning. However, this instrument has yet to be adapted to Indonesian culture, thus requiring an adaptation process to be undertaken. This study aimed to validate the SOL instrument in an Indonesian context. The adaptation process was carried out following the guidelines of Beaton et al. (2000). Data were collected from 780 students in Vocational High Schools (VHS), majoring in the Automation and Office Management Programme (AOMP) in Central Java, Indonesia, and selected by incidental sampling. Data analysis relied on Exploratory Factor Analysis (EFA) and Confirmatory Factor Analysis (CFA), assisted by SPSS 23.0 and LISREL 8.80. The results show that the items of the adapted SOL instrument were valid but that its construct reliability needs improvement. Thus, this instrument does not fully meet the principle of convergent validity.

Keywords: self-regulated online learning, online learning, confirmatory factor analysis, exploratory factor analysis

Learning in online environments has become a trend in the last decade. Online learning provides several benefits, including flexibility in terms of time and location. Online learning presents challenges for both teachers and students as they adapt to a physically separated environment. Students are encouraged to learn independently and take responsibility for self-management in the learning process, including managing their time for studying and having awareness in themselves to carry out assignments. The ability to utilise various learning resources is one of the requirements for students.

Many studies have been conducted to test the effectiveness of online learning in improving student achievement. However, there is still room for improvement in analysing the effects of psychological and technical changes on student learning. Even though online learning is not something new, in Indonesia, online learning only became widely known during the extraordinary Covid-19 pandemic. After this remarkable event, the majority of students in Indonesia, especially at the secondary level, became familiar with online learning or hybrid learning, which combines online and face-to-face learning. Traditional learning offers better psychological conditions and understanding before switching to online learning (Yazid & Neviyarni, 2021). In modern times, the internet has become the most preferred and enjoyable medium of learning, surpassing other forms of learning media (Manurung et al., 2021).

It cannot be denied, however, that online learning offers more convenience when teachers and students are separated by space. Online learning can be a challenging experience for many students. It requires not only access to the necessary technological facilities but also a certain level of psychological preparedness. The ability to stay motivated, manage time effectively, and maintain focus is crucial for success in online learning. Students who possess these skills are more likely to excel in this mode of education. Previously, online learning was an option only when school conditions did not allow face-to-face learning, for example, when natural conditions were unsuitable. Online learning is an alternative that allows learning activities to continue. It can be carried out with the help of internet-based media, including Zoom meetings, Google Meet, Microsoft Teams, Google Classroom, and WhatsApp groups.

Students must have proficient learning independence because the key to successful online learning depends on both students and teachers. Online learning requires student learning independence in the learning process (Mufidah & Surjanti, 2021), and one of the key skills required for successful online learning is the ability to independently regulate one's own learning activities (Khoerunnisa et al., 2021). The online learning environment requires students to apply self-regulation, making it critical to study student psychology in order to gain insight into their ability to do so. Meanwhile, the accuracy of measuring instruments is vital so that they can represent the actual situation. In this study, we propose and validate a self-regulation instrument for online learning. We adapted an existing instrument that has been established through rigorous development across various sample populations to ensure its validity and reliability within the Indonesian context. Additionally, there is a need for more instruments relating to self-regulation in online learning in Indonesia. Our research focused on vocational school students in a trial area in Central Java, Indonesia.

Learning achievement is one of the benchmarks for student learning success (Azhar, 2015). Empirically, even though students' abilities may be high, they cannot achieve optimal learning if they fail to self-regulate in learning activities. This statement becomes a reference for the importance of self-regulated learning for each student. Self-regulated learning is the ability of students to regulate themselves to be active in metacognition, motivation, and attitude (behaviour) in learning (Zimmerman, 1989). Self-regulation also emphasises the importance of personal responsibility and the control of the knowledge and skills acquired by students, resulting in a positive and significant relationship to student achievement through the use of self-regulation strategies, especially in the context of online learning (Zimmerman & Martínez-Pons, 2012).

Previous research has also supported the idea that self-regulated learning is one of the factors consistently having an impact on student academic achievement (Broadbent & Poon, 2015; Cho et al., 2017; Cho & Shen, 2013; Juaninda et al., 2020; Wong et al., 2018). The results of Maldonado-Mahauad et al. (2018) also support that individuals who self-regulate their learning tend to achieve higher academic results than individuals who cannot regulate their learning behaviour. For this reason, self-regulated learning is essential to success in online learning (Littlejohn et al., 2016).

Self-regulated learning skills are critical in online learning environments because students must plan, manage, and control their learning activities to complete the learning process successfully (Wang et al., 2013). Self-regulated learning can, therefore, help achieve learning objectives (Kizilcec et al., 2017). Students with high levels of self-regulation tend to take advantage of a more flexible approach to managing their learning process (Littlejohn et al., 2016). Students with high self-regulation spend more time watching video lectures and taking regular tests, and they are also more likely to return to learning the subject matter (Kizilcec et al., 2017).

The primary objective of this study was to examine the importance of self-regulated learning and its relationship with student achievement in an online learning environment. Therefore, an instrument was needed to measure self-regulated learning in an online learning environment. The Self-Regulated Online Learning Questionnaire is widely used to assess independent learning skills online (Barnard et al., 2009; Vilkova & Shcheglova, 2021). This instrument has been widely adapted for use in Turkish, Romanian, Russian, and Chinese contexts (Cazan, 2014; Chorng et al., 2019; Korkmaz & Kaya, 2012; Martinez-Lopez et al., 2017). Notably, despite extensive research into the matter, there remains a pressing need to provide evidence of the effectiveness of the Self-Regulated Online Learning Questionnaire.

This study adopts the Self-Regulated Online Learning (SOL) instrument developed by Jansen et al. (2017). The instrument was developed by combining questionnaire items from the Motivated Strategies for Learning Questionnaire (MSLQ), Online Self-Regulated Learning Questionnaire (OSLQ), Metacognitive Awareness Inventory (MAI), and Learning Strategies Questionnaire (LS) (Vilkova & Shcheglova, 2021). SOL instruments have been applied in parts of the West, such as Europe and the United States. In Indonesia, research validating an adaptation of the SOL measuring instrument has yet to be explicitly undertaken. Therefore, it is vital to conduct research focusing on the adaptation and validation of the self-regulated online learning instrument developed by Jansen et al. (2017) in the Indonesian context. Thus, this study aimed to understand independent learning skills in an online learning environment utilising Self-Regulated Online Learning.

The objectives of this study were to:

This study employed a quantitative survey design to adapt and validate the Self-Regulated Online Learning (SOL) instrument in the Indonesian context. Data analysis involved Exploratory Factor Analysis (EFA; n = 430) and Confirmatory Factor Analysis (CFA; n = 350) using SPSS 23.0 and LISREL 8.80 to examine factor structure, construct validity, and reliability.

The subjects of this study were Grade 11 students majoring in the Automation and Office Management Programme (AOMP). The samples were collected from 14 different Vocational High Schools (VHS) located across the Central Java region, Indonesia. From the total population of students in these schools, 780 respondents participated in the study, consisting of 36 male students and 744 female students.

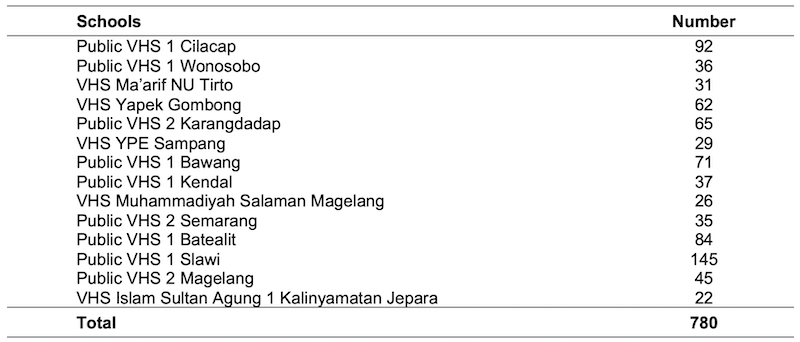

The sampling process employed an incidental sampling technique (a type of non-probability sampling), where data were gathered from students who were accessible and willing to participate during the data collection period. Data collection was conducted online using Google Forms in December 2021. Detailed information regarding the distribution of respondents across the 14 participating schools is presented in Table 1.

Table 1: Data on Research Respondents

The study's instrument was adapted from Jansen et al.'s 2017 study on Self-Regulated Online Learning (SOL). SOL consists of 36 items representing five dimensions: metacognitive skills, time management, environmental structuring, persistence, and help-seeking.

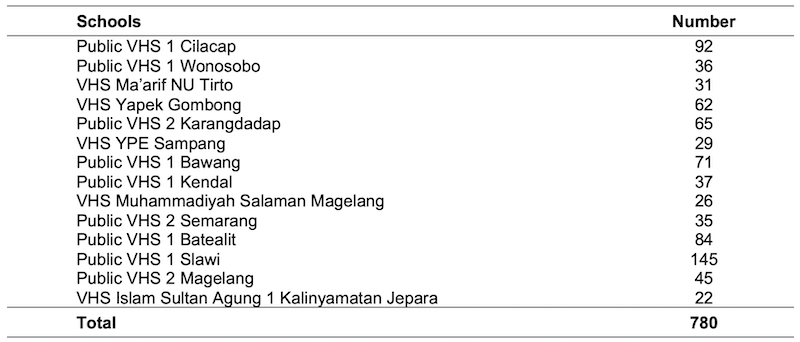

Instrument adaptation is a term that describes the process of translating an instrument from the original language to the target language. In its development, instrument adaptation has become more popular using the term cross-cultural adaptation. Cross-cultural adaptation includes the process of language transfer and cultural adaptation issues in preparing instruments by adjusting where the instrument will be used (Beaton et al., 2000). The flow of instrument adaptation is presented in Figure 1.

Stage 1 involved correspondence via email with Jansen, the original developer of the Self-Regulated Online Learning (SOL) instrument, to obtain formal permission to adapt the instrument. The researchers obtained permission on October 14, 2021 to adapt the SOL. The initial translation from the original to the target language was completed at this stage. Translator 1 was NS, an English Lecturer at Tadulako University. He served as a person who understood the concept of a questionnaire and could provide equivalence from the perspective of the measured construct. Translator 2 was SA, an Economics Lecturer at Universitas Negeri Semarang. Translator 2 was tasked with detecting differences in meaning from the original questionnaire with Translator 1.

Stage 2 synthesised the results of the translation from the previous stage. Researchers conducted the synthesis stage to observe possible discrepancies between the two translation results. At the end of the synthesis process, statement items were produced and carried out in the back translation process at Stage 3.

Stage 3 was back translation, translating the synthesis results into the original language. This process ensured that the translated version represented the same exact item content as the original version.

Stage 4 was the expert committee, which combined all the versions compiled to develop a pre-final version for field testing. The expert committee consisted of a methodologist (UF), a linguist professional (NS), and a translator expert (SA). The researcher compiled the instrument before distributing it to participants who met the criteria. Items were answered on a 7-point Likert scale, ranging from "very uncharacteristic of me" (= 1) to "very characteristic of me" (= 7).

Stage 5 involved testing subjects with the same characteristics as the population to be studied. At this stage, the researcher analysed the data that was collected using Exploratory Factor Analysis (EFA) and Confirmatory Factor Analysis (CFA). The data was collected from 780 respondents, N = 430 with EFA and N = 350 with CFA. The sample division into two groups in the EFA and CFA analysis aimed to avoid false discoveries (Anderson et al., 2017; Kumalasari et al., 2020).
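The split into an EFA subsample and a CFA subsample can be illustrated with a minimal sketch. It assumes a simple random partition with a fixed seed; the text does not state how respondents were allocated to the two groups, so the function name `split_sample`, the seed, and the respondent identifiers are all hypothetical.

```python
import random


def split_sample(respondent_ids, n_efa, seed=0):
    """Randomly partition respondents into an EFA subsample and a CFA subsample.

    Assumption: a simple random split; the paper does not describe its procedure.
    """
    ids = list(respondent_ids)
    if not 0 < n_efa < len(ids):
        raise ValueError("n_efa must be between 1 and len(respondent_ids) - 1")
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    rng.shuffle(ids)
    return ids[:n_efa], ids[n_efa:]


# 780 respondents in total: 430 for EFA and the remaining 350 for CFA.
efa_ids, cfa_ids = split_sample(range(1, 781), n_efa=430)
print(len(efa_ids), len(cfa_ids))  # 430 350
```

Keeping the two subsamples disjoint is what allows the CFA to act as an independent check on the structure suggested by the EFA.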

The first step in SOL validation was to perform an EFA using SPSS Version 23.0. Although this research adapted the SOL measuring instrument, whose factors had already been identified (Jansen et al., 2017), Costello and Osborne (2005) and Kumalasari et al. (2020) still recommend performing EFA on adapted measuring tools to provide more convincing empirical evidence regarding the number of construct factors in different samples.

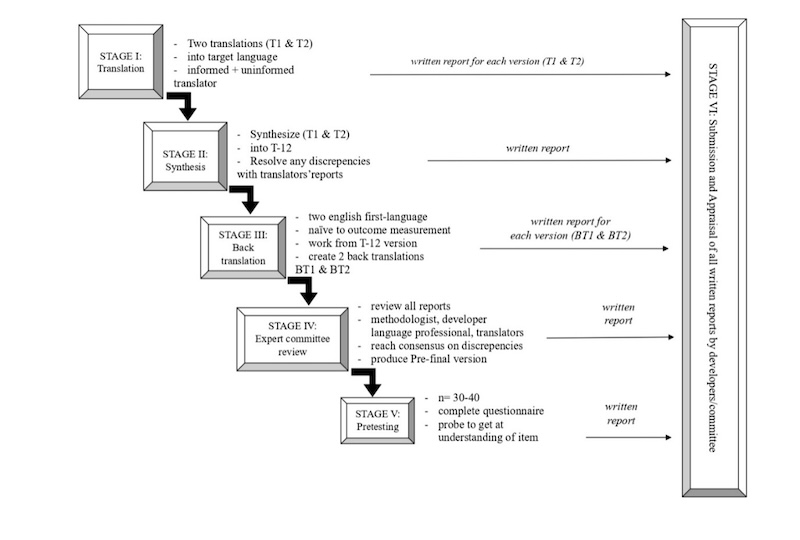

Table 2 shows the results of Total Variance Explained, which describes the factor structure obtained when five factors were specified, following the previous research article, using SPSS 23.0. The total variance that the EFA model can explain is 53.183%. The first factor (metacognitive skills) accounted for 22.730%; the second factor (time management), 9.338%; the third factor (environmental structuring), 8.951%; the fourth factor (persistence), 7.827%; and the fifth factor (help-seeking), 4.336%.
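As a quick arithmetic check, the per-factor values quoted above can be accumulated to recover the total. This is only a sketch over the five rounded figures given in the text, read as percentages of variance; the rounded values sum to 53.182, which agrees with the reported 53.183 up to rounding.

```python
from itertools import accumulate

# Variance explained per factor, as quoted in the text for Table 2.
variance_pct = {
    "metacognitive skills": 22.730,
    "time management": 9.338,
    "environmental structuring": 8.951,
    "persistence": 7.827,
    "help-seeking": 4.336,
}

# Running (cumulative) totals, rounded to the three decimals used in the text.
cumulative = [round(c, 3) for c in accumulate(variance_pct.values())]
for (name, pct), cum in zip(variance_pct.items(), cumulative):
    print(f"{name:<26}{pct:8.3f}{cum:8.3f}")

total = round(sum(variance_pct.values()), 3)
```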

Table 2: Result of Total Variance Explained

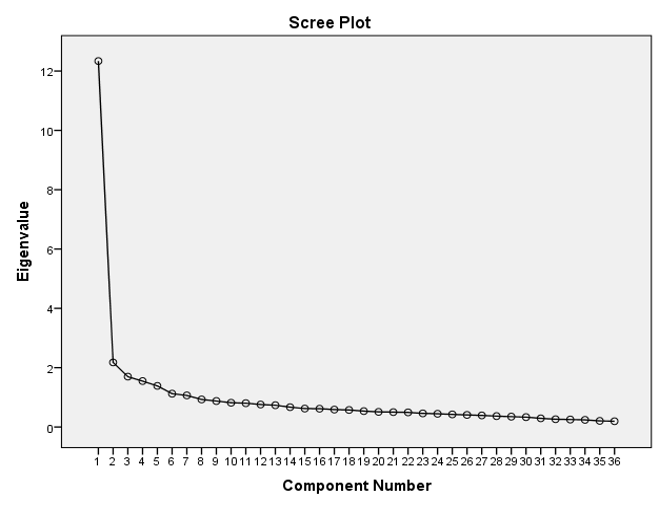

The number of factors formed can also be seen from the scree plot. Five points lie above an eigenvalue of one, which matches the five factors specified in the previous research article and indicates five groupings of items. More details can be seen in Figure 2.

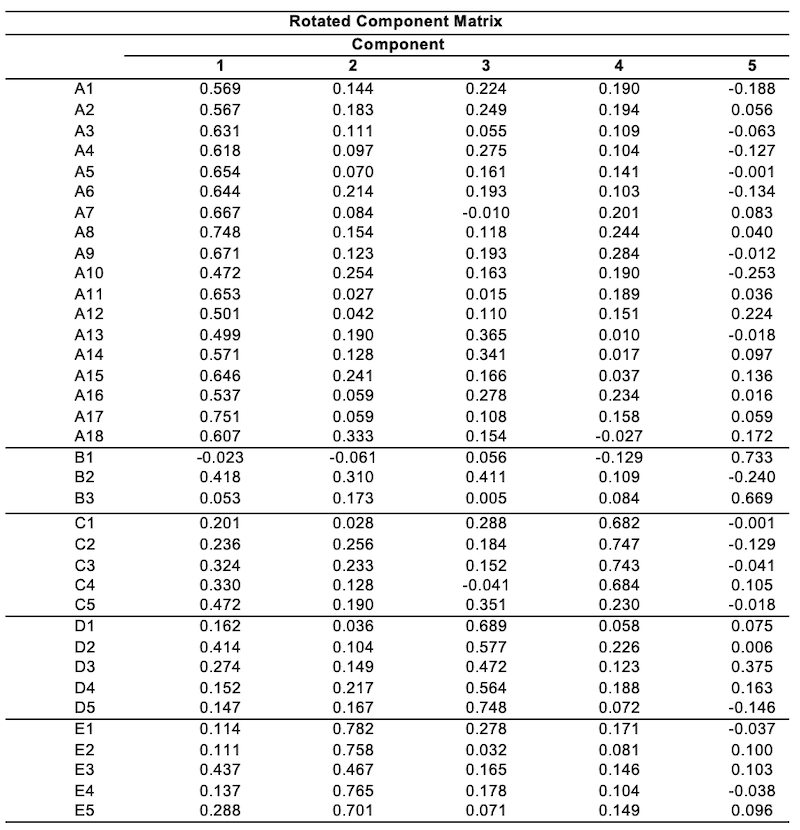

Rotation is necessary to find out which items belong to each factor. The rotation used the varimax method with Kaiser Normalisation and converged in six iterations. A factor loading is considered significant if its value is above 0.3 (Field, 2013; Kumalasari et al., 2020). Table 3 presents the results of the Rotated Component Matrix, which places each item in its component factor. Empirically, however, item B2 (I make sure that I keep up with the weekly readings and assignments for this online learning), with a factor loading of 0.418, shifted from factor 2 (time management) to factor 1 (metacognitive skills). The same happened with item C5 (I know what the instructor expects me to learn in this online learning), which, with a factor loading of 0.472, shifted from factor 3 (environmental structuring) to factor 1 (metacognitive skills).
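The way a rotated loading matrix places items into factors can be sketched as follows. This is an illustration under stated assumptions, not the SPSS procedure itself: each item is assigned to the factor on which it loads most strongly, provided that loading exceeds 0.3. Only the loadings 0.418 (B2) and 0.472 (C5) on factor 1 come from the text; the item label `B1` and every other number are made-up placeholders.

```python
THRESHOLD = 0.3  # minimum loading treated as significant in the text


def assign_items(loadings, threshold=THRESHOLD):
    """Map each item to the factor with its largest absolute loading.

    Items whose largest loading does not exceed the threshold map to None.
    """
    assignment = {}
    for item, row in loadings.items():
        factor, value = max(row.items(), key=lambda kv: abs(kv[1]))
        assignment[item] = factor if abs(value) > threshold else None
    return assignment


# Hypothetical rotated loadings on factors 1-3 (only 0.418 and 0.472 are reported).
loadings = {
    "B2": {1: 0.418, 2: 0.250, 3: 0.100},
    "C5": {1: 0.472, 2: 0.080, 3: 0.210},
    "B1": {1: 0.120, 2: 0.650, 3: 0.050},
}
print(assign_items(loadings))  # {'B2': 1, 'C5': 1, 'B1': 2}
```

Under this rule an item written for one factor can end up in another, which is the kind of shift reported for B2 and C5.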

Table 3: EFA Results on Self-Regulated Online Learning

Extraction Method: Principal Component Analysis.

Rotation Method: Varimax with Kaiser Normalisation.

a. Rotation converged in six iterations.

According to the findings of the exploratory factor analysis (EFA), the adapted Self-Regulated Online Learning instrument, applied to archival learning for Automation and Office Management Programme (AOMP) students, was deemed valid. All 36 items in the questionnaire were empirically shown to have a factor loading greater than 0.3. Therefore, the EFA results support the adapted instrument as a valid measure of self-regulated online learning among AOMP students.

Based on the grouping of items obtained with EFA, further evidence of the instrument's construct validity was sought using CFA, assisted by the LISREL 8.80 programme. CFA was used to test the dimensionality of the construct by examining the validity and reliability of its variables, indicators, and items, that is, the capability of the research instrument (the questionnaire items) to measure precisely (Sujarweni, 2018).

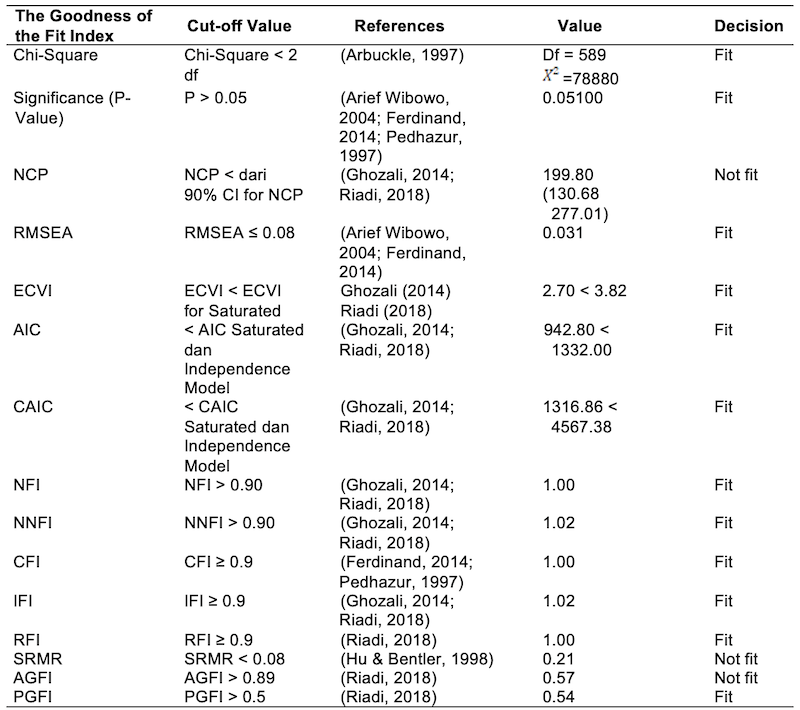

The overall test results in Table 4, based on five model fit criteria, show that all model fit criteria were met. Therefore, it can be concluded that the overall model test shows a good fit (Goodness of Fit).

Table 4: CFA Results on Self-Regulated Online Learning

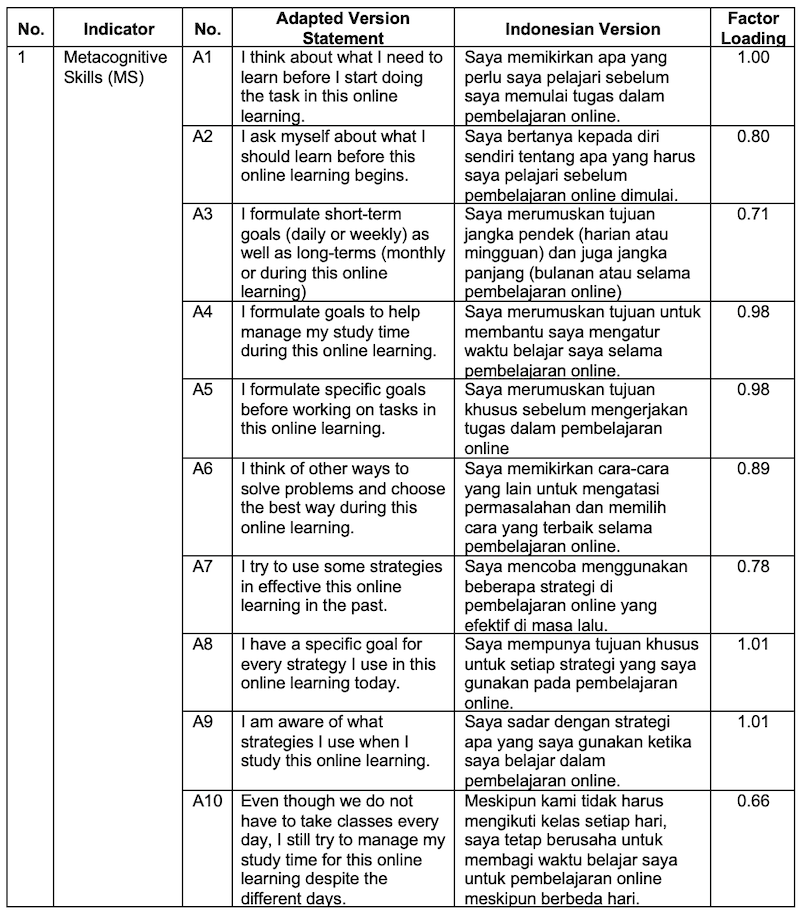

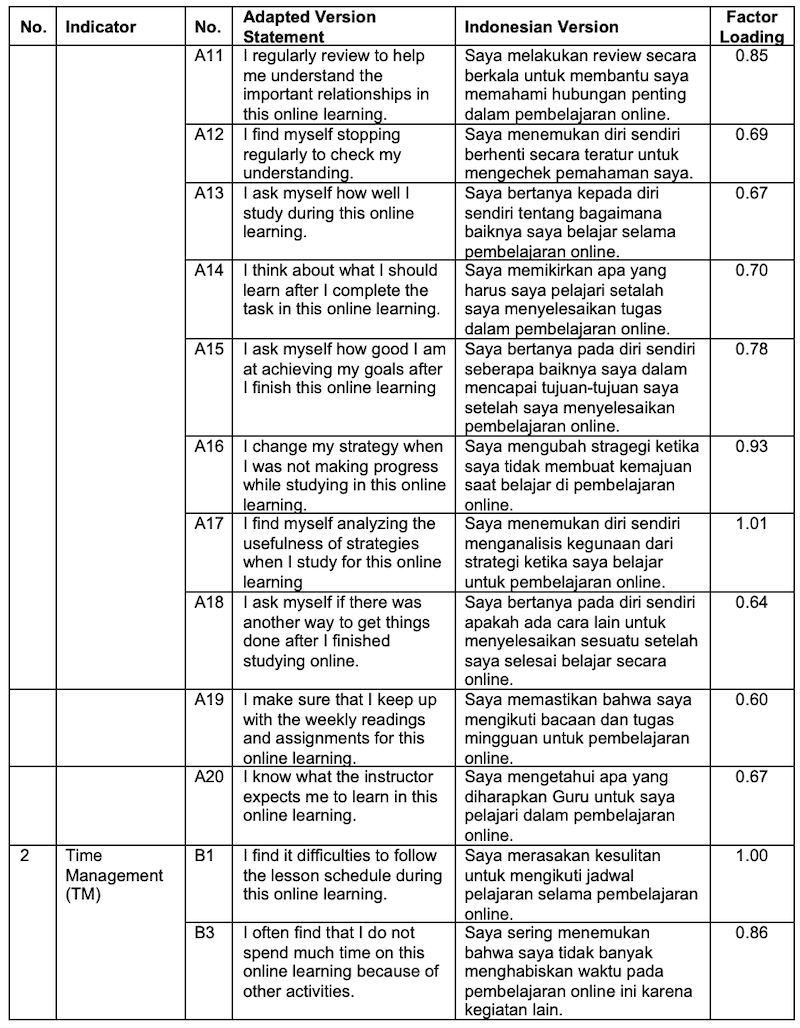

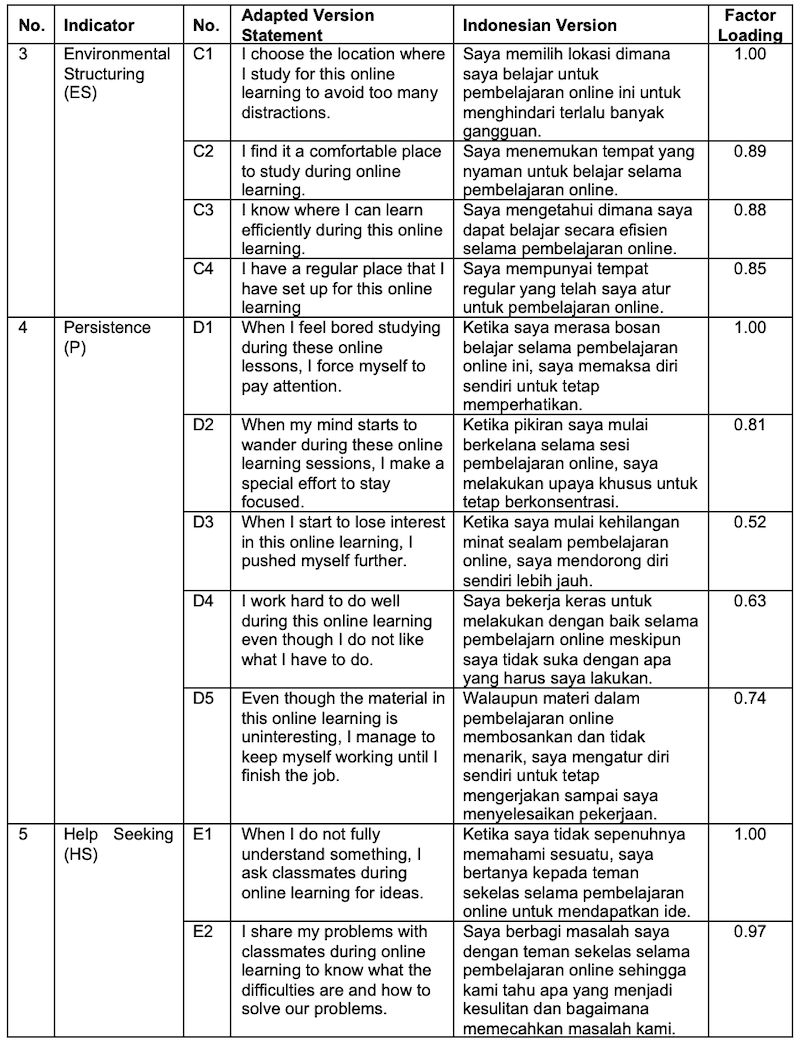

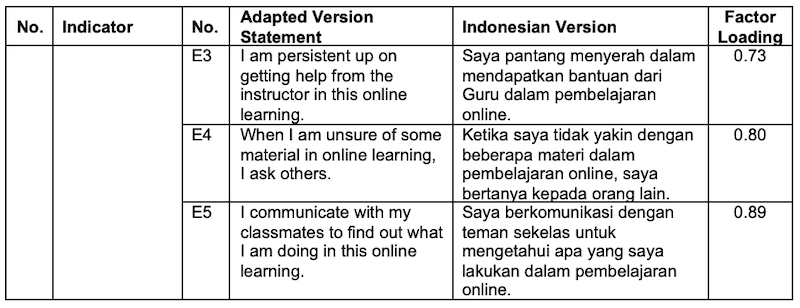

The questionnaire for Self-Regulated Online Learning, in both its original and adapted versions, consisted of five indicators and 36 statements. These five indicators were Metacognitive Skills (MS), Time Management (TM), Environmental Structuring (ES), Persistence (P), and Help Seeking (HS). Following the Confirmatory Factor Analysis (CFA), there were shifts in the number of items for the Metacognitive Skills (MS), Time Management (TM), and Environmental Structuring (ES) indicators compared to the original version. Specifically, the Metacognitive Skills (MS) indicator increased from 18 items to 20 items. Conversely, the Time Management (TM) indicator decreased from three items to two items, as item B2 from the original version was reclassified as A19. Similarly, the Environmental Structuring (ES) indicator changed from five items to four items, with item C5 from the original version being reclassified as A20.
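The item counts described above follow directly from the two reclassifications, as this small bookkeeping sketch shows. It covers only the three indicators whose counts are stated in the text; the tuple layout in `moves` is an illustrative choice.

```python
# Item counts in the original version for the three affected indicators.
original = {"MS": 18, "TM": 3, "ES": 5}

# (item, source indicator, destination indicator), as reported in the text.
moves = [("B2", "TM", "MS"), ("C5", "ES", "MS")]

adapted = dict(original)
for item, source, destination in moves:
    adapted[source] -= 1
    adapted[destination] += 1

print(adapted)  # {'MS': 20, 'TM': 2, 'ES': 4}

# Reclassifying items does not change the total number of items.
assert sum(adapted.values()) == sum(original.values())
```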

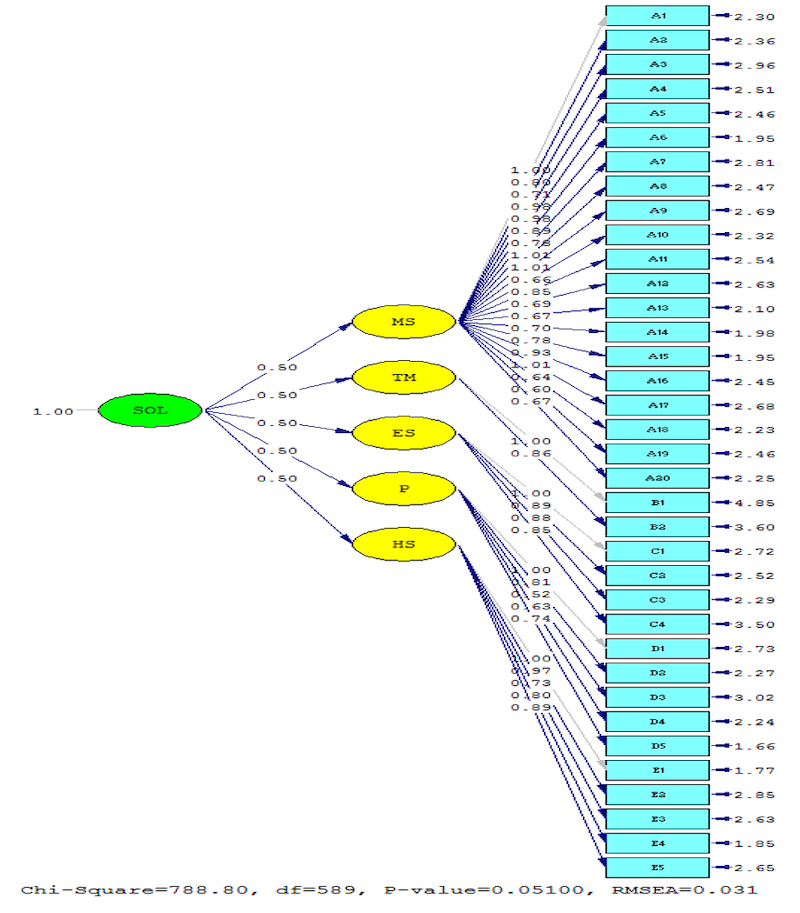

The next consideration was the assessment of model fit, which is complex and requires close attention (Ghozali, 2014). An index indicating that the model fits does not guarantee that the model genuinely fits. Conversely, a fit index that suggests the model is unsuitable does not guarantee that the model is genuinely unfit. In structural equation modeling (SEM), researchers therefore cannot rely on one or a few fit indices; it is best to consider the full set of fit indices. The Goodness of Fit statistics output can be seen in Figure 3.

Table 5 shows that the Goodness of Fit estimation results are generally in the fit category; only three indices were not fit, so, overall, it can be concluded that the model was reasonably fit. The sample covariance matrix was relatively the same as the estimated covariance matrix (Riadi, 2018).
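Judging a model on the whole set of indices rather than on a single one can be sketched as below. The cutoffs are rule-of-thumb values often quoted in SEM texts and are assumptions here, as are the index values; neither is taken from Table 5, whose contents are not reproduced in the text.

```python
import operator

# Rule-of-thumb cutoffs (assumptions for illustration, not the paper's criteria).
CUTOFFS = {
    "RMSEA": (operator.le, 0.08),  # smaller is better
    "CFI": (operator.ge, 0.90),    # larger is better
    "NFI": (operator.ge, 0.90),
    "GFI": (operator.ge, 0.90),
}


def evaluate_fit(indices, cutoffs=CUTOFFS):
    """Return, for each index, whether it satisfies its cutoff."""
    report = {}
    for name, value in indices.items():
        compare, cutoff = cutoffs[name]
        report[name] = compare(value, cutoff)
    return report


# Hypothetical index values, not the study's LISREL output.
hypothetical = {"RMSEA": 0.06, "CFI": 0.93, "NFI": 0.91, "GFI": 0.87}
report = evaluate_fit(hypothetical)
n_fit = sum(report.values())
print(report, f"{n_fit}/{len(report)} indices meet their cutoff")
```

A summary of this kind makes explicit how many indices support the model and which ones do not, which is the basis for an overall judgement such as "reasonably fit".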

Table 5: The Goodness of Fit Testing Criteria

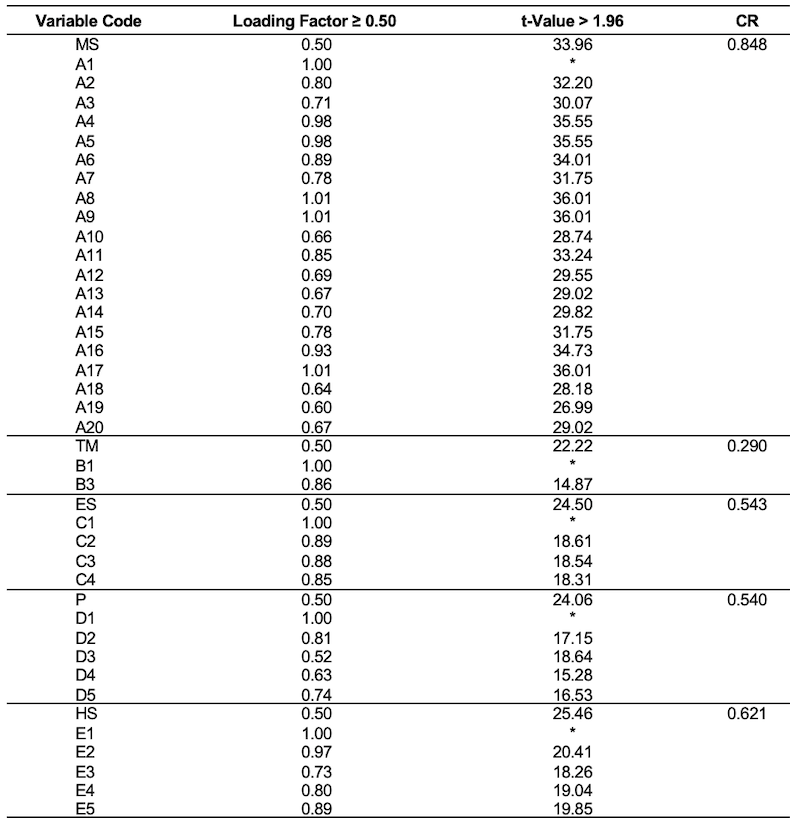

Evidence was gathered through the model fit test (Goodness of Fit) with CFA, convergent validity, and construct reliability. One approach to testing construct validity is factor analysis (Azwar, 2017). According to Sujarweni (2018), the validity test assesses whether a set of measuring instruments is appropriate for measuring what should be measured. Table 5 shows that five (5) latent variables (factors) and thirty-six (36) observation variables (items) had a standardised loading factor value of ≥ 0.50 (Hair et al., 2014) with a T-value > 1.96 (Sujarweni, 2018). This demonstrated that each observed variable (item) was significant in measuring the latent variable. Overall, thirty-six observed variables were valid items measuring the construct of Self-Regulated Online Learning.
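The item-level decision rule can be written down as a short sketch. It reads the 0.50 loading value as a lower bound and uses the 1.96 critical t-value from the text; the item labels and numbers in the example are hypothetical, not LISREL output from this study.

```python
def item_is_valid(loading, t_value, min_loading=0.50, critical_t=1.96):
    """Apply the rule: standardised loading >= 0.50 and |t| > 1.96."""
    return loading >= min_loading and abs(t_value) > critical_t


# Hypothetical (standardised loading, t-value) pairs for three made-up items.
items = {
    "item_a": (0.62, 11.4),
    "item_b": (0.50, 2.10),
    "item_c": (0.41, 6.30),
}
validity = {name: item_is_valid(*values) for name, values in items.items()}
print(validity)  # {'item_a': True, 'item_b': True, 'item_c': False}
```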

In addition to the validity test, a reliability test was also conducted. Sujarweni (2018) stated that the reliability test shows the extent to which a measuring instrument can provide relatively the same results if repeated measurements are made on the same object. High construct reliability (CR ≥ 0.70) indicates that internal consistency exists, meaning the measures all consistently represent the same latent construct (Hair et al., 2014).
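Construct reliability is commonly computed from standardised loadings as CR = (Σλ)² / ((Σλ)² + Σ(1 − λ²)), and average variance extracted (AVE), a frequently used indicator of convergent validity, as the mean of the squared loadings. The sketch below assumes these standard formulas, since the text does not spell out its calculation, and uses hypothetical loadings.

```python
def construct_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances).

    Assumes standardised loadings, so each error variance is 1 - loading^2.
    """
    if not loadings:
        raise ValueError("at least one loading is required")
    squared_sum = sum(loadings) ** 2
    error_variance = sum(1 - value ** 2 for value in loadings)
    return squared_sum / (squared_sum + error_variance)


def average_variance_extracted(loadings):
    """AVE = mean of the squared standardised loadings."""
    if not loadings:
        raise ValueError("at least one loading is required")
    return sum(value ** 2 for value in loadings) / len(loadings)


# Hypothetical three-item factor with equal loadings of 0.70.
example = [0.70, 0.70, 0.70]
print(round(construct_reliability(example), 3))       # 0.742
print(round(average_variance_extracted(example), 3))  # 0.49
```

Because the numerator grows with the number of items, factors measured by only a few items tend to yield lower CR for the same loading size.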

Table 6 shows the results of construct reliability. Among the five factors of Self-Regulated Online Learning, only the metacognitive skills factor demonstrated high reliability, with a reliability coefficient of 0.848, indicating that it is reliable. In contrast, the other four factors showed reliability values below 0.70 and were therefore considered unreliable: time management (0.290), environmental structuring (0.543), persistence (0.540), and help-seeking (0.621). According to Nunnally (1970), a reliability coefficient above 0.70 indicates good reliability (Greco et al., 2018). Thus, only the metacognitive skills factor met the recommended reliability threshold, while the remaining factors would require improvement to achieve adequate reliability.
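The comparison against the 0.70 threshold can be reproduced from the coefficients quoted above. The values are those reported in the text; the variable names are illustrative.

```python
THRESHOLD = 0.70  # recommended minimum reliability coefficient

# Construct reliability per factor, as reported in the text for Table 6.
reported_cr = {
    "metacognitive skills": 0.848,
    "time management": 0.290,
    "environmental structuring": 0.543,
    "persistence": 0.540,
    "help-seeking": 0.621,
}

reliable = [name for name, cr in reported_cr.items() if cr >= THRESHOLD]
unreliable = [name for name, cr in reported_cr.items() if cr < THRESHOLD]

print("reliable:", reliable)      # reliable: ['metacognitive skills']
print("unreliable:", unreliable)
```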

Table 6: Calculation of Validity and Reliability

In practice, the items in a measuring instrument must first be valid and are then tested for reliability. Valid items are not always reliable, whereas reliable items can be taken as valid (Sujarweni, 2018). This occurred in the present adaptation of the Self-Regulated Online Learning instrument, where all items were declared valid, yet only one factor met the construct reliability threshold while four did not. Thompson and Vacha-Haase (2000) suggest that other factors also affect reliability estimates. Five such factors are test length, speed, group homogeneity, item difficulty, and objectivity. Similarly, according to Fernandes (1984, p. 88), the factors affecting reliability are the length of tests, the spread of scores, and the difficulty of test items. Arikunto (2010) explains that high or low values of item reliability are influenced by the following:

The instructions for filling out the questionnaire were included in writing in the Google Form. Completion of the questionnaire was not supervised. The questionnaire was distributed online and was addressed directly to each student with the approval of the archiving subject teacher. As a result, responses depended on when students had time to fill out the questionnaire and on the varying conditions in which they did so. Ismiyati et al. (2021) suggest that the low reliability of items in the test might be due to the students’ current school environment, including ongoing renovation work and a moving class system; for example, in one day there were three examinations held in three different rooms. Moving from one room to another between examinations may have contributed to the low reliability. It is essential, therefore, to carry out EFA and CFA when adapting an instrument, particularly when the instrument comes from a different background and culture. It would be helpful to have a representative sample for both the EFA and CFA analyses. In addition, when choosing an instrument to adapt, its factors or dimensions should not vary much in the number of items, meaning the item counts should be reasonably balanced between one factor and another within each construct.

This study aimed to validate the adaptation of the Self-Regulated Online Learning (SOL) instrument in the Indonesian context. Based on EFA and CFA results, the instrument retained a five-factor structure consistent with Jansen et al. (2017), with minor item shifts from the time management and environmental structuring factors to the metacognitive skills factor. The CFA indicated that the overall model fit was acceptable. All 36 items were valid; however, only the metacognitive skills factor demonstrated adequate construct reliability (CR = 0.848), while the other factors were below the recommended threshold (i.e., CR < 0.70). This shows that although the items were valid, the instrument’s construct reliability needs improvement, and not all factors fully satisfied the principle of convergent validity.

These findings were consistent with other SRL scale adaptation studies (e.g., Kumalasari et al., 2020; Jansen et al., 2017), which report that some factors, especially those with fewer items, might have lower reliability when instruments are adapted cross-culturally. This highlights the challenge of transferring SRL instruments developed in the West to the Indonesian educational context.

Future research should focus on improving the reliability of less consistent factors, for example by increasing the number of items per factor or refining item wording to better reflect the construct in Indonesian culture. Additionally, further studies could explore the theoretical and practical implications of SOL in online learning, particularly the role of metacognitive skills in enhancing self-regulated learning outcomes. Strengthening instrument validity and reliability is essential to ensure accurate measurement and effective implementation of SOL in diverse educational contexts.

Ethics Approval: Formal ethics approval was not required by the institution for this type of research, as it involved minimal risk. However, all research procedures were conducted in accordance with the ethical standards of the institutional research committee and the 1964 Helsinki Declaration. Informed consent was obtained from all participants, and their anonymity and confidentiality were strictly maintained throughout the research process.

Anderson, M.L., Magruder, J., Casey, K., Miguel, T., Mullainathan, S., & Olken, B. (2017). Split-sample strategies for avoiding false discoveries. NBER Working Paper No. W23544. https://ssrn.com/abstract=2996305

Arbuckle, J. (1997). AMOS users’ guide: Version 3.6. Small Waters Corporation.

Arief Wibowo, S. (2004). Materi pelatihan structural equation model. Lembaga Penelitian Universitas Airlangga Surabaya.

Arikunto, S. (2010). Prosedur penelitian suatu pendekatan praktek edisi revisi IV. Rineka Cipta.

Azhar, F. (2015). Assessing students’ learning achievement: An evaluation. Mediterranean Journal of Social Sciences, 6(2), 535-540. https://doi.org/10.5901/mjss.2015.v6n2p535

Azwar. (2017). Reliabilitas dan validitas (Edisi 4). Pustaka Pelajar.

Barnard, L., Lan, W.Y., To, Y.M., Paton, V.O., & Lai, S.L. (2009). Measuring self-regulation in online and blended learning environments. Internet and Higher Education, 12(1), 1-6. https://doi.org/10.1016/j.iheduc.2008.10.005

Beaton, D.E., Bombardier, C., Guillemin, F., & Ferraz, M.B. (2000). Guidelines for the process of cross-cultural adaptation of self-report measures. Spine, 25(24), 3186-3191. https://journals.lww.com/spinejournal/citation/2000/12150/

Broadbent, J., & Poon, W.L. (2015). Self-regulated learning strategies & academic achievement in online higher education learning environments: A systematic review. Internet and Higher Education, 27, 1-13. https://doi.org/10.1016/j.iheduc.2015.04.007

Cazan, A. (2014). Self-regulated learning and academic achievement in the context of online learning environments. The 10th International Scientific Conference E-Learning and Software for Education, Bucharest, July.

Cho, M.H., Kim, Y., & Choi, D.H. (2017). The effect of self-regulated learning on college students’ perceptions of community of inquiry and affective outcomes in online learning. Internet and Higher Education, 34, 10-17. https://doi.org/10.1016/j.iheduc.2017.04.001

Cho, M. H., & Shen, D. (2013). Self-regulation in online learning. Distance Education, 34(3), 290-301. https://doi.org/10.1080/01587919.2013.835770

Chorng, Y.F., Ng, L.Y.A., & Shahabuddin, H. (2019). Improving self-regulated learning through personalized weekly e-learning journals: A time series quasi-experimental study. Journal of Business Education & Scholarship of Teaching, 13(1), 30-45.

Costello, A.B., & Osborne, J. (2005). Best practices in exploratory factor analysis: Four recommendations for getting the most from your analysis. Practical Assessment, Research, and Evaluation, 10(1). https://openpublishing.library.umass.edu/pare/article/id/1650/

Ferdinand, A. (2014). Structural equation modeling dalam penelitian manajemen Aplikasi model-model rumit dalam penelitian untuk skripsi, tesis, dan disertasi doktor. Undip Press.

Fernandes, H.J.X. (1984). Testing and measurement. Evaluation and Curriculum Development.

Field, A. (2013). Discovering statistics using IBM SPSS Statistics. SAGE.

Ghozali, I. (2014). Structural equation modeling, metode alternatif dengan partial least square (PLS). Badan Penerbit Universitas Diponegoro.

Greco, L.M., O'Boyle, E.H., Cockburn, B.S., & Yuan, Z. (2018). Meta‐analysis of coefficient alpha: A reliability generalization study. Journal of Management Studies, 55(4), 583-618. https://onlinelibrary.wiley.com/doi/full/10.1111/joms.12328

Hair, J.F.J., Black, W.C., Babin, B.J., & Anderson, R.E. (2014). Multivariate data analysis. Pearson Prentice Hall.

Hu, L., & Bentler, P.M. (1998). Fit indices in covariance structure modeling: Sensitivity to under parameterized model misspecification. Psychological Methods, 3(4), 424-453. https://doi.org/10.1037/1082-989X.3.4.424

Ismiyati, I., Kartowagiran, B., Muhyadi, M., Sholikah, M., Suparno, S., & Tusyanah, T. (2021). Understanding students’ intention to use mobile learning at Universitas Negeri Semarang: An alternative learning from home during Covid-19 pandemic. Journal of Educational, Cultural and Psychological Studies (ECPS Journal), 23. https://doi.org/10.7358/ecps-2021-023-ismi

Jansen, R.S., van Leeuwen, A., Janssen, J., Kester, L., & Kalz, M. (2017). Validation of the self-regulated online learning questionnaire. Journal of Computing in Higher Education, 29(1), 6-27. https://doi.org/10.1007/s12528-016-9125-x

Juaninda, C.P., Auliani, C.R., Dumbi, K.F., Dumbi, K.S., Putri, R.N.H., & Safitri, S. (2020). Hubungan antara self-regulated learning (SRL), teaching presence (TP), dan Pencapaian Akademis dalam Skema Pembelajaran Jarak Jauh (PJJ) pada Mahasiswa Universitas Indonesia. Prosiding Konferensi Mahasiswa Psikologi Indonesia 1.0, February, 202-216.

Khoerunnisa, N., Rohaeti, E.E., & Ayu Ningrum, D.S. (2021). Gambaran self regulated learning siswa terhadap pembelajaran daring pada masa pandemi Covid 19. FOKUS (Kajian Bimbingan & Konseling dalam Pendidikan), 4(4), 298-308. http://www.journal.ikipsiliwangi.ac.id/index.php/fokus/article/view/7433

Kizilcec, R.F., Pérez-Sanagustín, M., & Maldonado, J.J. (2017). Self-regulated learning strategies predict learner behavior and goal attainment in Massive Open Online Courses. Computers and Education, 104, 18-33. https://doi.org/10.1016/j.compedu.2016.10.001

Korkmaz, Ö., & Kaya, S. (2012). Adapting online self-regulated learning scale into Turkish. Turkish Online Journal of Distance Education, 13(1), 52-67. https://doi.org/10.17718/tojde.69293

Kumalasari, D., Luthfiyani, N.A., & Grasiawaty, N. (2020). Analisis faktor adaptasi instrumen resiliensi akademik versi Indonesia: Pendekatan eksploratori dan konfirmatori. JPPP — Jurnal Penelitian Dan Pengukuran Psikologi, 9(2), 84-95. https://doi.org/10.21009/jppp.092.06

Littlejohn, A., Hood, N., Milligan, C., & Mustain, P. (2016). Learning in MOOCs: Motivations and self-regulated learning in MOOCs. Internet and Higher Education, 29, 40-48. https://doi.org/10.1016/j.iheduc.2015.12.003

Maldonado-Mahauad, J., Pérez-Sanagustín, M., Kizilcec, R.F., Morales, N., & Munoz-Gama, J. (2018). Mining theory-based patterns from big data: Identifying self-regulated learning strategies in Massive Open Online Courses. Computers in Human Behavior, 80, 179-196. https://doi.org/10.1016/j.chb.2017.11.011

Manurung, R., Sadjiarto, A., & Sitorus, D.S. (2021). Aplikasi Google Classroom sebagai Media Pembelajaran Online dan Dampaknya Terhadap Keaktifan Belajar Siswa pada Masa Pandemi Covid-19. Jurnal Kependidikan: Jurnal Hasil Penelitian dan Kajian Kepustakaan di Bidang Pendidikan, Pengajaran dan Pembelajaran, 7(3), 729-739. https://e-journal.undikma.ac.id/index.php/jurnalkependidikan/article/view/3853

Martinez-Lopez, R., Yot, C., Tuovila, I., & Perera-Rodríguez, V.H. (2017). Online self-regulated learning questionnaire in a Russian MOOC. Computers in Human Behavior, 75, 966-974. https://doi.org/10.1016/j.chb.2017.06.015

Mufidah, N.L., & Surjanti, J. (2021). Efektivitas model pembelajaran blended learning dalam meningkatkan kemandirian dan hasil belajar peserta didik pada masa pandemi covid-19. Ekuitas: Jurnal Pendidikan Ekonomi, 9(1), 187-198. https://ejournal.undiksha.ac.id/index.php/EKU/article/view/34186

Pedhazur, E.J. (1997). Multiple regression in behavioral research: Explanation and prediction. Harcourt Brace College Publishers.

Riadi, E. (2018). Statistik SEM dengan LISREL. CV Andi Offset.

Sujarweni, V.W. (2018). Panduan mudah olah data structural equation modeling (SEM) dengan LISREL. Pustaka Baru Press.

Thompson, B., & Vacha-Haase, T. (2000). Psychometrics is datametrics: The test is not reliable. Educational and Psychological Measurement, 60(2), 174-195. https://doi.org/10.1177/0013164400602002

Vilkova, K., & Shcheglova, I. (2021). Deconstructing self-regulated learning in MOOCs: In search of help-seeking mechanisms. Education and Information Technologies, 26(1), 17-33. https://doi.org/10.1007/s10639-020-10244-x

Wang, C., Schwab, G., Fenn, P., & Chang, M. (2013). Self-efficacy and self-regulated learning strategies for English language learners: Comparison between Chinese and German college students. Journal of Educational and Developmental Psychology, 3(1). https://doi.org/10.5539/jedp.v3n1p173

Wong, T.K.Y., Tao, X., & Konishi, C. (2018). Teacher support in learning: Instrumental and appraisal support in relation to math achievement. Issues in Educational Research, 28(1), 202-219.

Yazid, H., & Neviyarni, N. (2021). Pengaruh pembelajaran daring terhadap psikologis siswa akibat COVID-19. Human Care Journal, 207-213.

Zimmerman, B.J. (1989). A social cognitive view of self-regulated academic learning. Journal of Educational Psychology, 81(3), 329-339. https://doi.org/10.1037/0022-0663.81.3.329

Zimmerman, B.J., & Martínez-Pons, M. (2012). Perceptions of efficacy and strategy use in the self-regulation of learning. In Student perceptions in the classroom (pp. 185-208). Routledge.

Author Notes

Ismiyati Ismiyati is a permanent lecturer at the Department of Office Administration Education, Faculty of Economics and Business, Universitas Negeri Semarang. She received her doctoral degree in Research and Evaluation Education from Universitas Negeri Yogyakarta in 2025. Her research interests include assessment and evaluation of office administration learning, and educational issues across various contexts. Email: ismiyati@mail.unnes.ac.id (https://orcid.org/0000-0002-4706-1340)

Suranto Aw is a Professor and permanent lecturer at the Faculty of Social and Political Sciences and a faculty member in the Educational Research and Evaluation Department, Graduate School, Universitas Negeri Yogyakarta. He holds a doctoral degree in Research and Evaluation Education from Universitas Negeri Yogyakarta. His research interests include communication program evaluation and educational issues across various contexts. Email: suranto@uny.ac.id (https://orcid.org/0000-0002-7338-8689)

Haryanto Haryanto is an Associate Professor at the Department of Electrical Engineering Education, Faculty of Engineering, and a faculty member in the Educational Research and Evaluation Department, Graduate School, Universitas Negeri Yogyakarta, Indonesia. His research focuses on educational evaluation, STEM education, and instructional strategies in technical and vocational education. Email: haryanto@uny.ac.id (https://orcid.org/0000-0003-3322-904X)

Farida Agus Setiawati is a Professor and permanent lecturer at the Faculty of Psychology and a faculty member in the Educational Research and Evaluation Department, Graduate School, Universitas Negeri Yogyakarta. She holds a doctoral degree in Research and Evaluation Education from Universitas Negeri Yogyakarta. Her research interests include psychometrics and measurement in psychology and education. Email: faridaagus@uny.ac.id (https://orcid.org/0000-0003-0099-9179)

Yulia Ayriza is a Professor and permanent lecturer at the Faculty of Psychology and a faculty member in the Educational Research and Evaluation Department, Graduate School, Universitas Negeri Yogyakarta. She completed her undergraduate and master’s education at Universitas Gadjah Mada, majoring in Developmental Psychology, and obtained her doctoral degree in Psychology from the School of Social Sciences, Universiti Sains Malaysia. Her research interests include developmental psychology and educational issues across various contexts. Email: yulia_ayriza@uny.ac.id (https://orcid.org/0000-0002-3623-7742)

Mar'atus Sholikah is a lecturer at Politeknik Balekambang Jepara in Indonesia. Her research interests are in educational management, educational technology, digital learning innovation, and instructional design, with an emphasis on higher and vocational education. Email: maratussholikah@polibang.ac.id (https://orcid.org/0000-0003-4271-4389)

Cite as: Ismiyati, I., Aw, S., Haryanto, H., Setiawati, F.A., Ayriza, Y., & Sholikah, M. (2026). Self-regulated online learning instrument adaptation: A measurement model. Journal of Learning for Development, 13(1), 48-67.